Table of Contents

- Why SEO Testing Matters More Than Ever

- What an SEO Test Really Is

- The Biggest Reason Most SEO Tests Fail

- How to Run Your Own SEO Tests the Right Way

- 1. Start with a real hypothesis

- 2. Choose pages that are genuinely comparable

- 3. Change one core variable at a time

- 4. Decide which metrics actually matter

- 5. Establish a baseline before you touch anything

- 6. Roll out carefully and QA like a maniac

- 7. Let the test run long enough to gather signal

- 8. Analyze the outcome honestly

- What You Should Test First

- Common SEO Testing Mistakes to Avoid

- How to Present Test Results to Stakeholders

- The Long-Term Payoff of Building a Testing Culture

- Experiences from the Real World of SEO Testing

- Conclusion

SEO is full of confident advice. Change your title tags. Add more internal links. Trim your URLs. Rewrite your headers. Add structured data. Remove structured data. Stand on one foot during a core update and whisper sweet nothings to Googlebot. Everyone seems to have a “proven” tactic. The problem is that search is messy, competitive, and constantly moving. What worked for one site, in one niche, on one template, during one season, may do absolutely nothing for yours.

That is exactly why running your own SEO tests matters. Instead of treating every blog post, conference talk, or LinkedIn proclamation like holy scripture, smart SEOs test changes on their own websites, in their own environment, against their own goals. It is the difference between following a recipe and actually tasting the soup.

When you test properly, you stop guessing. You learn which changes lift clicks, which improvements help indexing, which adjustments boost visibility, and which ideas belong in the digital junk drawer next to “keyword density targets” and “just add more H2s.” Even better, testing gives you evidence. That evidence helps you win internal buy-in, protect traffic, and avoid rolling out sitewide changes that look brilliant in a slide deck but flop in the real world.

Why SEO Testing Matters More Than Ever

Search engines have become better at understanding intent, structure, page quality, and user expectations. That is good news for helpful websites and bad news for lazy assumptions. A tactic that improves one class of pages may hurt another. A test that lifts product pages may do nothing for blog posts. A clever title rewrite might improve click-through rate on one template but tank it on another because it cuts off the wrong words.

SEO testing matters because it helps you answer questions that generic best-practice lists cannot:

- Do shorter title tags improve clicks for your category pages?

- Do stronger internal links help deeper pages get crawled and discovered faster?

- Does moving key content from JavaScript-rendered modules into HTML improve indexation or traffic?

- Does adding FAQ-style supporting copy actually help, or just make the page feel like it ate a thesaurus?

- Does structured data improve appearance and click behavior for this page type, or does it just give your dev team another ticket to ignore?

In other words, testing turns SEO from a belief system into a decision-making system. And that is a much healthier religion.

What an SEO Test Really Is

An SEO test is a structured experiment that measures the effect of a change on organic search performance. In plain English, you change something on a set of pages, compare the results against a control group, and watch whether the variant performs better, worse, or not differently enough to matter.

That sounds simple, but the details matter. SEO testing is not the same as classic CRO A/B testing. In CRO, different visitors can see different versions of a page at the same time. In SEO, search engines need a consistent version they can crawl, render, index, and rank. That is why SEO tests usually split pages, not visitors, into control and variant groups, and why your test design must respect crawling, canonicalization, redirects, and indexing behavior.

Good SEO tests usually focus on templated or similar pages. Think ecommerce category pages, product detail pages, location pages, listings, glossary entries, or blog posts that follow a common structure. When pages share similar intent and architecture, it becomes easier to isolate the variable you changed and judge the impact more fairly.

The Biggest Reason Most SEO Tests Fail

The biggest reason SEO tests fail is not bad math. It is bad discipline.

Teams often change too many things at once. They tweak title tags, rewrite intros, change internal links, compress images, move modules, update schema, and then declare victory because traffic went up three weeks later. That is not a test. That is digital soup.

Other teams pick the wrong pages. They mix branded and non-branded queries, combine informational and commercial intent, or compare pages with wildly different traffic baselines. That creates noisy data and fuzzy conclusions. And fuzzy conclusions are how bad ideas get promoted.

The fix is wonderfully unsexy: test one meaningful variable, on comparable pages, for a clear business reason, with a control group that gives you something solid to compare against.

How to Run Your Own SEO Tests the Right Way

1. Start with a real hypothesis

Do not begin with, “Let’s test something.” Begin with, “We believe this change will improve this metric for this kind of page because of this reason.” A real hypothesis keeps the team focused and prevents you from wandering into a swamp of vanity metrics.

For example: “We believe rewriting title tags on category pages to lead with the primary query and product modifier will improve organic CTR without hurting rankings.” That is a testable idea. “Let’s make the titles more SEO-y” is not.

2. Choose pages that are genuinely comparable

Group together pages with similar purpose, structure, and demand patterns. A test works best when your control and variant pages are close cousins, not distant relatives who only meet at Thanksgiving.

If you test category pages, do not mix in blog posts. If you test local landing pages, do not sneak a few service pages into the bucket. Similarity gives your results a fighting chance.
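Once you have a pool of similar pages, you still need to split it fairly. The sketch below shows one simple approach under stated assumptions. It expects a dict mapping each URL to its baseline clicks, from a single page type you have already filtered. It sorts pages by traffic, cuts them into tiers, and randomly assigns pages within each tier. The result is that control and variant each get a similar mix of high- and low-traffic pages. The function name and the tiering scheme are illustrative, not a standard method.

```python
import random


def stratified_split(pages, n_tiers=4, seed=42):
    """Split pages into control and variant groups within traffic tiers.

    `pages` maps URL -> baseline clicks (assumed to come from a pre-test
    export of one page type). Pages are ranked by clicks, cut into
    `n_tiers` roughly equal tiers, shuffled within each tier, and then
    alternately assigned so both groups share a similar traffic profile.
    """
    rng = random.Random(seed)  # fixed seed makes the split reproducible
    ranked = sorted(pages, key=pages.get, reverse=True)
    tier_size = max(1, -(-len(ranked) // n_tiers))  # ceiling division
    groups = {"control": [], "variant": []}
    for tier_index, start in enumerate(range(0, len(ranked), tier_size)):
        tier = ranked[start:start + tier_size]
        rng.shuffle(tier)
        for i, url in enumerate(tier):
            # Alternate which group gets the extra page when a tier has odd size.
            slot = (i + tier_index) % 2
            groups["control" if slot == 0 else "variant"].append(url)
    return groups
```

Stratifying by traffic is a design choice. A purely random split of a small page set can easily put most of the top pages in one bucket. That alone can swamp the effect you are trying to measure.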

3. Change one core variable at a time

Single-variable testing is not always perfect in SEO, but it is still the cleanest path to learning. Change the title format, or the internal linking block, or the hero copy, or the placement of crawlable text. Not all of it at once. When one test answers one question, you build knowledge quickly. When one test tries to answer six questions, you learn almost nothing.

4. Decide which metrics actually matter

For most SEO tests, the core metrics come from Google Search Console: clicks, impressions, CTR, and average position. Together they show whether visibility changed, whether more searchers clicked, and whether the pages gained or lost ground in the results. If the test touches indexing or crawlability, you should also check URL Inspection results, the Page indexing report, and the Crawl stats report.
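When you compare groups, aggregate the raw numbers instead of averaging per-page ratios. The sketch below assumes each row is a dict with `clicks`, `impressions`, and `position` keys; those column names are an assumption about your export. It recomputes CTR from totals. It also approximates group-level average position by weighting each row by its impressions, so a page with a handful of impressions cannot drag the average around.

```python
from dataclasses import dataclass


@dataclass
class GroupMetrics:
    clicks: int
    impressions: int
    ctr: float           # total clicks / total impressions
    avg_position: float  # impression-weighted average of row positions


def summarize(rows):
    """Aggregate Search Console-style rows (one per page or page/day) for a group."""
    clicks = sum(r["clicks"] for r in rows)
    impressions = sum(r["impressions"] for r in rows)
    if impressions == 0:
        # No impressions: CTR and position are undefined, so report NaN.
        return GroupMetrics(clicks, 0, float("nan"), float("nan"))
    weighted_position = sum(r["position"] * r["impressions"] for r in rows)
    return GroupMetrics(
        clicks=clicks,
        impressions=impressions,
        ctr=clicks / impressions,
        avg_position=weighted_position / impressions,
    )
```

For example, two pages with 30 and 10 clicks on 1,000 impressions each, at positions 4 and 8, summarize to a 2% CTR and an average position of 6.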

At the business level, you may also care about organic sessions, conversions, revenue, leads, or assisted conversions. Just do not confuse business outcomes with page-level SEO signals. A page can win more impressions and still lose conversions if the messaging gets weird. That is why mature teams look at both search performance and business performance together.

5. Establish a baseline before you touch anything

Before launching the test, document baseline performance for both control and variant groups. Export your page sets. Record clicks, impressions, CTR, average position, and any key conversion metrics. Note the date, the pages involved, and the exact change you plan to make.

This step is boring, which is precisely why it saves careers.
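A baseline is easiest to trust when it is written down in a fixed, machine-readable form before launch. The following is a minimal sketch that bundles the hypothesis, the planned change, the page groups, and pre-test metrics into one JSON-serializable record. Every field name here is illustrative, and the metric values you pass in are whatever your own export produces.

```python
import json
from datetime import date


def baseline_record(hypothesis, change, groups, metrics, recorded_on=None):
    """Build a baseline snapshot for a planned SEO test.

    `groups` maps group name -> list of URLs.
    `metrics` maps group name -> dict of pre-test numbers (clicks,
    impressions, CTR, position, conversions, or whatever you track).
    """
    missing = set(groups) - set(metrics)
    if missing:
        raise ValueError(f"no baseline metrics for groups: {sorted(missing)}")
    return {
        "recorded_on": (recorded_on or date.today()).isoformat(),
        "hypothesis": hypothesis,
        "planned_change": change,
        "groups": {name: sorted(urls) for name, urls in groups.items()},
        "baseline": metrics,
    }


# Usage with placeholder values:
record = baseline_record(
    hypothesis="Leading category titles with the primary query improves CTR",
    change="Title format: '<query> <modifier> | <Brand>'",
    groups={"control": ["/shoes/trail", "/shoes/road"], "variant": ["/boots/hiking"]},
    metrics={"control": {"clicks": 10}, "variant": {"clicks": 12}},
    recorded_on=date(2024, 1, 15),
)
print(json.dumps(record, indent=2))
```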

6. Roll out carefully and QA like a maniac

Even brilliant test ideas get wrecked by sloppy deployment. A title test can accidentally break templates. A content test can inject duplicate headings. An internal linking test can create crawl traps or weird anchor text. A JavaScript change can hide the very content you intended to expose.

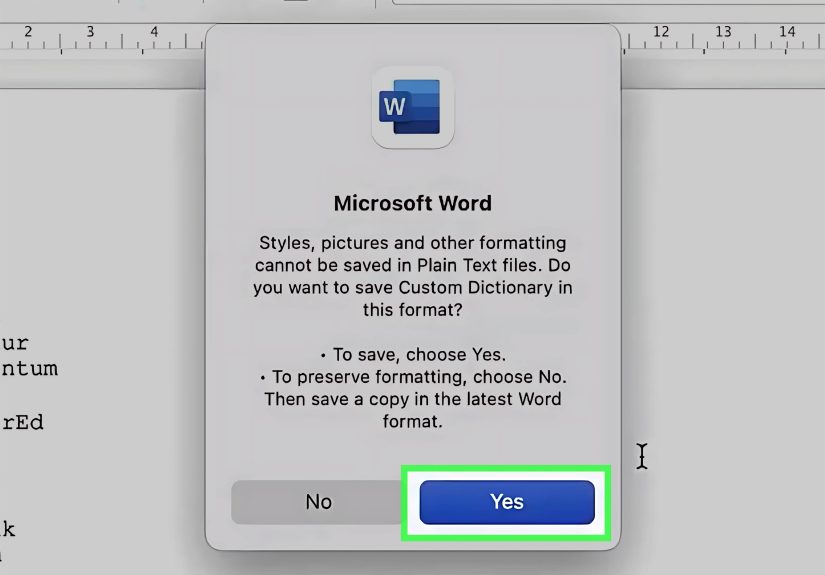

QA the pages before and after launch. Check the rendered HTML. Confirm canonicals. Validate redirects. Make sure the pages remain crawlable and indexable. Inspect a sample of URLs in Search Console. If the test uses alternate URLs for experimentation, follow search-friendly testing practices. Point variant URLs at the original with rel="canonical". Avoid cloaking, which means showing search engines different content than users see. Use temporary (302) redirects rather than permanent (301) ones. End the experiment as soon as you have your answer instead of leaving it running forever.
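Part of this QA can be scripted so every page in the test gets checked, not just a handful. The sketch below uses Python's standard `html.parser` to confirm three things: each page has exactly the canonical you expect, no `noindex` robots meta tag, and a non-empty `<title>`. It only inspects the HTML string you pass in and does not fetch or render anything. To check the rendered state, feed it rendered HTML. The function and class names are hypothetical.

```python
from html.parser import HTMLParser


class SeoTagParser(HTMLParser):
    """Collect canonical links, robots meta values and <title> text."""

    def __init__(self):
        super().__init__()
        self.canonicals = []
        self.robots = []
        self.title = ""
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        a = {k: (v or "") for k, v in attrs}  # attributes without values become ""
        if tag == "link" and "canonical" in a.get("rel", "").lower().split():
            self.canonicals.append(a.get("href", ""))
        elif tag == "meta" and a.get("name", "").lower() == "robots":
            self.robots.append(a.get("content", "").lower())
        elif tag == "title":
            self._in_title = True

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def qa_page(html, expected_canonical):
    """Return a list of problems for one page; an empty list means it passed."""
    parser = SeoTagParser()
    parser.feed(html)
    problems = []
    if parser.canonicals != [expected_canonical]:
        problems.append(f"canonical: expected {expected_canonical}, found {parser.canonicals}")
    if any("noindex" in value for value in parser.robots):
        problems.append("robots meta contains noindex")
    if not parser.title.strip():
        problems.append("missing or empty <title>")
    return problems
```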

7. Let the test run long enough to gather signal

SEO is not instant coffee. Search engines need time to crawl, process, and reflect changes. Users need time to search, see, and click. Your market also needs time to stop doing weird market things.

How long is long enough depends on the size of the page set and the volume of impressions, but the principle is simple: do not call a winner after three days because a graph twitched upward and made you emotional.
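A rough way to sanity-check "long enough" for a CTR test is the standard sample-size formula for comparing two proportions. The sketch below estimates how many impressions each group needs to detect a given relative CTR lift. Treat the result as an optimistic lower bound, not a stopping rule. The formula assumes every impression is an independent trial, which real search traffic is not: queries, positions, and days are all correlated. The baseline CTR and lift you feed it are your own assumptions.

```python
from math import ceil
from statistics import NormalDist


def impressions_needed(base_ctr, relative_lift, alpha=0.05, power=0.8):
    """Approximate impressions per group to detect a CTR change.

    Uses the normal-approximation sample size for a two-sided,
    two-proportion z-test. Assumes independent impressions, so real
    SEO tests usually need more data than this suggests.
    """
    p1 = base_ctr
    p2 = base_ctr * (1 + relative_lift)
    if not (0 < p1 < 1 and 0 < p2 < 1) or p1 == p2:
        raise ValueError("CTRs must be in (0, 1) and the lift must be non-zero")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)
```

With a 3% baseline CTR, detecting a 10% relative lift works out to roughly 53,000 impressions per group. Halving the lift you want to detect roughly quadruples that number. Small page sets with modest impressions often cannot detect small effects at all.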

8. Analyze the outcome honestly

There are only three respectable outcomes: positive, negative, or inconclusive. Positive means roll it out more broadly. Negative means reverse it or rethink it. Inconclusive means you learned something valuable: either the change did not matter enough, the sample was too weak, or external noise drowned out the signal.

Inconclusive does not mean “let’s pretend it worked because the VP likes the idea.” That move belongs in the comedy section, not the SEO strategy deck.
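One simple, transparent way to reach a positive, negative, or inconclusive verdict is a difference-in-differences comparison with a permutation test. The sketch below assumes you have per-page clicks for equal-length pre and post periods in both groups. It measures each page's log change, then takes the variant average minus the control average. Seasonality that hits both groups equally cancels out in that subtraction. It then asks how often randomly relabeled pages produce an effect at least that large. This is an illustrative technique, not how any particular SEO testing tool works; commercial tools typically use forecasting or causal models instead.

```python
import random
from math import log
from statistics import mean


def page_log_change(pre, post):
    """Log ratio of post- to pre-period clicks; +1 smoothing avoids log(0)."""
    return log((post + 1) / (pre + 1))


def classify_test(control, variant, n_perm=5000, alpha=0.05, seed=0):
    """Return (effect, p_value, verdict) for lists of (pre_clicks, post_clicks) per page."""
    c = [page_log_change(*page) for page in control]
    v = [page_log_change(*page) for page in variant]
    observed = mean(v) - mean(c)  # difference-in-differences on a log scale
    pooled = c + v
    rng = random.Random(seed)
    extreme = 0
    for _ in range(n_perm):
        rng.shuffle(pooled)
        diff = mean(pooled[len(c):]) - mean(pooled[:len(c)])
        if abs(diff) >= abs(observed) - 1e-12:  # tolerance for float rounding
            extreme += 1
    p_value = (extreme + 1) / (n_perm + 1)
    if p_value >= alpha:
        verdict = "inconclusive"
    else:
        verdict = "positive" if observed > 0 else "negative"
    return observed, p_value, verdict
```

The log scale keeps one large page from dominating the result. An inconclusive verdict here means exactly what the section says: the data cannot distinguish the change from noise. It does not mean the change "kind of worked."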

What You Should Test First

If you are new to SEO testing, start with changes that are meaningful, measurable, and relatively reversible. These are usually the best first candidates:

Title tag formats

Test order, length, modifiers, branding placement, and whether the main query appears earlier. Titles influence how search engines understand pages and how users respond in the results. Small wording changes can affect CTR more than many teams expect.
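Title tests are easy to template. The sketch below builds a hypothetical "query first" variant in the format `<Primary query> <modifier> | <Brand>`. It also reports where the query sits in a title and whether the title is long enough to risk truncation. Google truncates titles by pixel width, not character count, and may rewrite titles entirely. The 60-character limit is therefore only a rough heuristic for flagging titles to review by hand.

```python
def build_title(primary_query, modifier, brand, separator=" | "):
    """Hypothetical 'query first' format: '<Primary query> <modifier> | <Brand>'."""
    query = primary_query.strip()
    # Uppercase only the first character so terms like "iPhone" survive.
    core = f"{query[:1].upper()}{query[1:]} {modifier.strip()}".strip()
    return f"{core}{separator}{brand.strip()}"


def title_diagnostics(title, primary_query, soft_limit=60):
    """Report length, query position, and a rough truncation flag for one title."""
    return {
        "length": len(title),
        "query_index": title.lower().find(primary_query.lower()),  # -1 = not present
        "may_truncate": len(title) > soft_limit,
    }


variant = build_title("red running shoes", "for women", "ShoeCo")
print(variant, title_diagnostics(variant, "red running shoes"))
```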

Internal linking modules

Test whether adding contextual internal links, improving anchor text, or surfacing deeper pages more prominently helps discovery and performance. Internal links remain one of the most practical levers for helping search engines and users navigate your site.

On-page copy placement

Test whether moving key explanatory copy higher on the page, clarifying headings, or expanding thin content improves visibility. This is especially useful on category, service, and template-heavy pages where intent needs clearer reinforcement.

Rendered HTML versus hidden or deferred content

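If important content is buried inside JavaScript-heavy modules, testing a version that puts it directly in the HTML can be revealing. Google can render JavaScript, but rendering may happen later than the initial crawl. Content that is already present in the initial HTML response is simpler for search engines to discover and process. Cleaner, directly rendered content often makes your SEO life easier.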
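Before running this kind of test, it helps to confirm which key phrases are actually missing from the server's initial HTML. The sketch below assumes you have already fetched the raw response, before any JavaScript runs. It extracts text outside `<script>`, `<style>`, and `<template>` elements and lists the phrases it cannot find. The phrase list and function names are hypothetical. Text inside script tags is skipped on purpose, because a phrase that exists only as a JavaScript string is not visible text until the script runs.

```python
from html.parser import HTMLParser


class InitialTextParser(HTMLParser):
    """Collect text from raw HTML, skipping script, style and template contents."""

    SKIP = {"script", "style", "template"}

    def __init__(self):
        super().__init__()
        self.skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self.skip_depth:
            self.skip_depth -= 1

    def handle_data(self, data):
        if not self.skip_depth:
            self.chunks.append(data)


def phrases_missing_from_initial_html(html, phrases):
    """Return the phrases that do not appear in the HTML's non-script text."""
    parser = InitialTextParser()
    parser.feed(html)
    # Collapse whitespace and lowercase so formatting differences do not matter.
    text = " ".join(" ".join(parser.chunks).split()).lower()
    return [p for p in phrases if " ".join(p.split()).lower() not in text]
```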

Structured data implementation

Structured data can help search engines understand page content and may support enhanced results in some cases. But it is not fairy dust. Test it thoughtfully, validate it carefully, and do not assume markup alone will rescue a weak page.
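"Validate it carefully" can start with a basic syntax check across every page in the variant group. The sketch below finds `<script type="application/ld+json">` blocks with Python's standard `html.parser`, parses them with `json`, and lists the `@type` values it finds. It also reports blocks that are invalid JSON or contain items without an `@type`. It does not check eligibility for rich results; Google's Rich Results Test and the Search Console reports cover that. The function names are hypothetical.

```python
import json
from html.parser import HTMLParser


class JsonLdParser(HTMLParser):
    """Collect the raw text of every JSON-LD script block."""

    def __init__(self):
        super().__init__()
        self.blocks = []
        self._capture = False

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").strip().lower()
        if tag == "script" and script_type == "application/ld+json":
            self._capture = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._capture = False

    def handle_data(self, data):
        if self._capture:
            self.blocks[-1] += data


def structured_data_types(html):
    """Return (list of @type values, list of error messages) for one page."""
    parser = JsonLdParser()
    parser.feed(html)
    types, errors = [], []
    for i, raw in enumerate(parser.blocks):
        try:
            data = json.loads(raw)
        except json.JSONDecodeError as exc:
            errors.append(f"block {i}: invalid JSON ({exc.msg})")
            continue
        if isinstance(data, dict) and "@graph" in data:
            items = data["@graph"]
        elif isinstance(data, list):
            items = data
        else:
            items = [data]
        for item in items:
            item_type = item.get("@type") if isinstance(item, dict) else None
            if item_type is None:
                errors.append(f"block {i}: item without @type")
            elif isinstance(item_type, list):
                types.extend(item_type)
            else:
                types.append(item_type)
    return types, errors
```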

Common SEO Testing Mistakes to Avoid

- Testing pages with different intent in the same group

- Changing multiple major elements at once

- Ignoring seasonality, promotions, or product availability shifts

- Declaring success based on a tiny CTR bump with no traffic scale

- Leaving test URLs, redirects, or canonical signals messy after the experiment

- Failing to separate branded performance from non-branded opportunity

- Rolling out a sitewide change before the test is truly understood
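Separating branded from non-branded performance is one of the easier mistakes to fix with a little code. The sketch below assumes query-level rows with `query` and `clicks` keys and a list of brand terms you supply, including common misspellings. It matches brand terms as whole words, case-insensitively. Keep in mind that Search Console omits some anonymized queries, so query-level totals will usually come in below page-level totals.

```python
import re


def make_brand_matcher(brand_terms):
    """Compile a case-insensitive regex matching any brand term as a whole word."""
    pattern = r"\b(?:" + "|".join(re.escape(t) for t in brand_terms) + r")\b"
    return re.compile(pattern, re.IGNORECASE)


def split_by_brand(rows, matcher):
    """Sum clicks for branded and non-branded queries separately."""
    totals = {"branded": 0, "non_branded": 0}
    for row in rows:
        key = "branded" if matcher.search(row["query"]) else "non_branded"
        totals[key] += row["clicks"]
    return totals
```

Whole-word matching is a deliberate choice. It keeps a short brand name from accidentally capturing unrelated queries that merely contain the same letters.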

One more mistake deserves special mention: testing for rankings instead of usefulness. Search engines increasingly reward content that is helpful, reliable, and designed for people first. If your “winning” test only makes a page more mechanical, repetitive, or manipulative, it may create short-term signal and long-term weakness. Do not optimize yourself into a personality disorder.

How to Present Test Results to Stakeholders

SEO testing is not just about learning. It is also about persuasion. When you present results, keep it simple:

- State the hypothesis

- Show the page groups

- Describe the exact change

- List the time frame

- Compare control versus variant performance

- Call the result positive, negative, or inconclusive

- Recommend next action

Executives do not want a 47-tab spreadsheet and a speech about “SERP volatility.” They want to know what changed, what happened, what it means, and what comes next. Give them that, and suddenly SEO sounds less like astrology and more like a growth function.

The Long-Term Payoff of Building a Testing Culture

When a company starts running SEO tests regularly, something important happens. The team becomes less reactive. Instead of chasing every rumor, they build an internal evidence base. They know what works on their templates. They know which page types respond to title work, which ones benefit from more copy, and which technical changes deserve engineering time.

That creates a compounding advantage. Over time, your SEO program becomes faster, calmer, and smarter. Ideas get prioritized better. Risk goes down. Wins become easier to defend. Failures become cheaper to absorb. And the entire organization stops treating SEO like a mysterious cave full of spreadsheets and stressed-out people.

Experiences from the Real World of SEO Testing

One of the most common experiences among SEO teams is discovering that their “sure thing” was not a sure thing at all. A team may spend weeks debating whether a title tag rewrite will transform performance, only to find that the change barely moves CTR. Meanwhile, a much less glamorous test, like improving internal links to underperforming category pages, quietly produces the stronger gain. That kind of result can be humbling, but it is also incredibly useful. It reminds teams that search performance is usually shaped by the interaction of relevance, crawlability, clarity, and competition, not by one magic trick.

Another familiar experience is how often testing exposes communication problems inside organizations. SEO teams may know exactly what they want to test, but getting the change live often requires coordination with developers, product managers, designers, analysts, and content editors. Suddenly, the SEO test is not just a search project. It becomes a workflow project. In many companies, the first real win from testing is not even the traffic lift. It is the creation of a cleaner process for experimentation, QA, deployment, and reporting.

There is also the emotional side of testing, which rarely gets discussed enough. Positive tests feel amazing because they validate effort and build momentum. Negative tests, however, can feel oddly personal. Teams sometimes become attached to ideas they have championed for months. When the data says the idea failed, the mature response is not defensiveness. It is gratitude. A failed test that prevents a bad sitewide rollout is a success in disguise. It may not win applause in a meeting, but it protects the business from self-inflicted damage.

Many practitioners also describe the strange but valuable experience of running tests that appear neutral at first, then become powerful once segmented properly. Maybe the overall result looks flat, but branded queries improved while non-branded queries declined. Maybe mobile performance improved while desktop stayed unchanged. Maybe mid-tier pages gained impressions while top pages held steady. These moments teach an important lesson: the first reading of a test is rarely the final story. Careful segmentation often reveals where the real insight is hiding.

Perhaps the most rewarding experience of all is watching a company shift from opinion-driven SEO to evidence-driven SEO. The conversations change. Instead of “I saw a post saying we should do this,” the room starts asking, “What did our last test show?” That is a major cultural upgrade. It reduces noise, improves prioritization, and gives SEO a more credible voice in strategic planning. Over time, testing builds confidence not because every experiment wins, but because every experiment teaches. And in a discipline as noisy as SEO, a team that learns faster than its competitors is already halfway to winning.

Conclusion

Running your own SEO tests is not optional if you want a mature, reliable search strategy. Best practices are helpful starting points, but they are not universal laws. Your site, your templates, your audience, and your competitive landscape create a unique testing environment. The only way to know what truly works is to test carefully, measure honestly, and learn continuously.

So yes, read the guides. Watch the conference talks. Steal smart ideas shamelessly. But before you roll out a change across thousands of pages because someone on the internet sounded confident, run the test. Your traffic, your team, and your future self will thank you.