Table of Contents

- What Gerrymandering Actually Does (Beyond Making Weird Shapes)

- The Legal Backdrop: Why the Math Matters More Than Ever

- The First Wave: Simple Metrics That Put Numbers on “Packing and Cracking”

- The Second Wave: Ensembles and Simulations (a.k.a. “Show Me the Alternative Universes”)

- Why “One Metric to Rule Them All” Usually Backfires

- Math in the Real World: How Experts Plug Into Redistricting

- But Can Math “Solve” Gerrymandering?

- Where Reform Meets Reality: Rules, Processes, and Incentives

- Experiences Related to “Math Experts Combining Their Powers to Take on Gerrymandering”

- Experience 1: The Workshop Where Everyone Learns to Speak Human

- Experience 2: The Moment the Computer Says, “That Map Is Weird”

- Experience 3: The Community Map That Beats the “Experts”

- Experience 4: The “Metric Disagreement” Argument (and How Teams Handle It)

- Experience 5: The “Build Trust or Lose the Room” Lesson

- Conclusion: The Map Isn’t Destiny When the Math Is Public

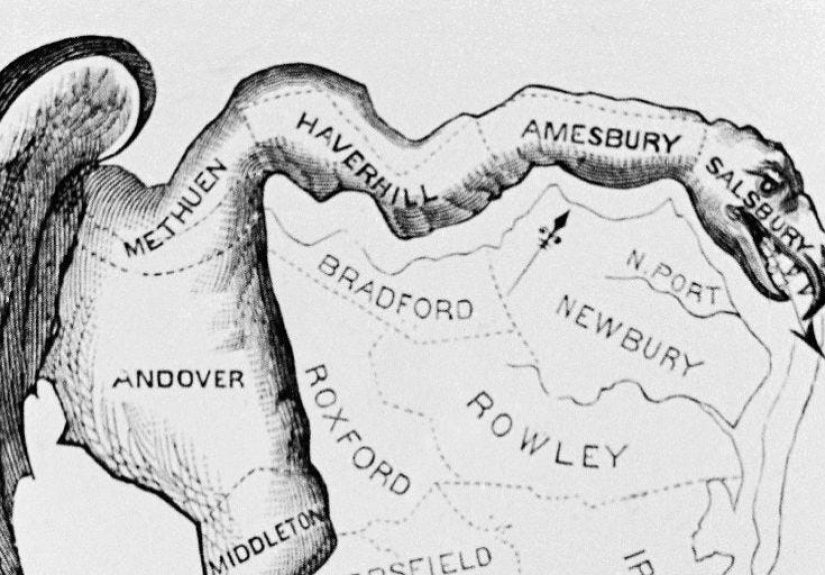

If you’ve ever looked at an election map and thought, “That district has the shape of a spilled latte,” congratulations: you’ve met the aesthetic side of gerrymandering. But the real issue isn’t that some districts look like modern art. It’s that district lines can be drawn to tilt representation so that the seats a party wins don’t match the votes it earns. That’s where mathematicians, statisticians, computer scientists, and data-minded legal scholars have been quietly assembling a superhero team (minus the capes, plus a lot of probability).

Over the past decade, a wave of quantitative tools has changed the anti-gerrymandering conversation from “that looks fishy” to “here’s the evidence.” New metrics, simulation methods, and open-source software now help answer a deceptively hard question: is a map unfair because of intentional line-drawing, or because voters are naturally clustered in ways that make representation lopsided? The math community’s answer is refreshingly honest: no single number can settle every case. But a carefully designed toolkit can make manipulation much harder to hide.

What Gerrymandering Actually Does (Beyond Making Weird Shapes)

Partisan gerrymandering is the practice of drawing districts to amplify one party’s power beyond what its statewide vote share would normally produce. Two classic moves drive most partisan maps:

- Packing: concentrating the opposing party’s voters into a small number of districts, letting them “win big” in a few places.

- Cracking: splitting the opposing party’s voters across many districts so they’re consistently outvoted.

Those two tactics can convert a modest statewide advantage into a big seat advantage, or even allow a party with fewer votes statewide to win more seats. The tricky part is that districting can also be constrained by legitimate criteria: equal population, minority protections, contiguity, compactness, and keeping communities together. So when someone says, “This map is unfair,” the next question is: unfair compared to what set of realistic alternatives?

The Legal Backdrop: Why the Math Matters More Than Ever

In the United States, redistricting must comply with federal requirements like near-equal population for congressional districts and protections for minority voters under the Voting Rights Act, while many additional rules come from state constitutions and statutes. States often include criteria such as contiguity, compactness, respecting political boundaries, and preserving “communities of interest” (groups likely to share common policy concerns).

The legal landscape also affects where challenges can succeed. Federal courts can hear racial gerrymandering and Voting Rights Act cases, but in 2019 (Rucho v. Common Cause) the U.S. Supreme Court held that claims of partisan gerrymandering present political questions beyond the reach of federal courts. That didn’t end the fight; it shifted much of it to state courts, state constitutions, ballot initiatives, and structural reforms like independent commissions. This shift makes objective, transparent evidence especially valuable: math can clarify whether a plan is a statistical outlier compared with maps that follow the same rules.

The First Wave: Simple Metrics That Put Numbers on “Packing and Cracking”

Math-minded reformers started by asking: can we measure partisan advantage the way we measure a fever (imperfectly, but well enough to know something’s wrong)? Several widely used metrics emerged.

1) The Efficiency Gap: Counting “Wasted Votes”

The efficiency gap is built on a straightforward idea: in each district, votes are “wasted” if they’re cast for a losing candidate, or if they exceed what the winning candidate needed to win. If one party wastes far more votes than the other across a statewide plan, the map may be systematically converting votes into seats more efficiently for one side, often due to packing and cracking.
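To make “wasted votes” concrete, here is a minimal Python sketch of the efficiency gap. It assumes a two-party contest in every district, no uncontested races, and takes “votes needed to win” as exactly half of the district’s two-party vote (some analysts use half plus one instead). The names `DistrictResult`, `wasted_votes`, and `efficiency_gap` are illustrative rather than taken from any particular library, and the sign convention (positive means Party A wastes more votes, so the map favors Party B) is one of several in use.

```python
from dataclasses import dataclass


@dataclass
class DistrictResult:
    """Two-party vote totals for one district (hypothetical structure)."""
    votes_a: int
    votes_b: int


def wasted_votes(d: DistrictResult) -> tuple[float, float]:
    """Return (wasted_a, wasted_b) for a single district.

    Assumption: the winner "needs" half of the two-party vote, so votes
    beyond that are surplus; every vote for the loser is wasted.
    """
    total = d.votes_a + d.votes_b
    needed = total / 2
    if d.votes_a > d.votes_b:
        return d.votes_a - needed, float(d.votes_b)
    if d.votes_b > d.votes_a:
        return float(d.votes_a), d.votes_b - needed
    raise ValueError("tied districts are not handled in this sketch")


def efficiency_gap(districts: list[DistrictResult]) -> float:
    """(wasted_a - wasted_b) / total votes; positive means A wastes more."""
    wasted_a = wasted_b = 0.0
    total_votes = 0
    for d in districts:
        wa, wb = wasted_votes(d)
        wasted_a += wa
        wasted_b += wb
        total_votes += d.votes_a + d.votes_b
    return (wasted_a - wasted_b) / total_votes


# Toy plan: a 50-50 state where Party A is packed into one district
# and cracked across the other four.
plan = [DistrictResult(90, 10)] + [DistrictResult(40, 60) for _ in range(4)]
print(f"efficiency gap: {efficiency_gap(plan):+.2f}")
```

In this toy plan Party B wins four of five seats with exactly half the vote. Party A wastes 200 votes to Party B’s 50 out of 500 cast, so the sketch prints a gap of +0.30.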

Analysts like the efficiency gap because it is intuitive and can be computed with readily available election returns. Journalists and researchers have used it to evaluate partisan advantage across states, and legal scholars have discussed thresholds that might flag extreme cases. But the efficiency gap also has limits: it can be affected by wave elections, uncontested races, and the natural geography of voters.

2) Partisan Symmetry: “If the Parties Swapped Votes, Would the Seats Swap Too?”

Another family of tools focuses on partisan symmetry. The core fairness intuition is this: whatever seat share Party A would win with a given vote share, Party B should win a similar seat share if it received that same vote share. Symmetry metrics include partisan bias and measures based on seat–vote curves. A related workhorse is the mean–median difference, which looks for skew in district-level vote distributions: if a party’s mean district vote share differs meaningfully from its median district vote share, that can hint that the party is helped or hurt by the way voters are distributed across districts.
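Here is a similarly hedged sketch of two symmetry-style measures, computed from Party A’s two-party vote share in each district. It assumes equal turnout across districts (so the average district share stands in for the statewide share) and models other elections with a uniform swing, shifting every district by the same amount. Sign conventions vary; here a positive mean–median difference means Party A’s median district sits below its average, and a negative partisan bias means Party A would win fewer than half the seats at a 50–50 statewide vote. The function names are illustrative.

```python
from statistics import mean, median


def mean_median_difference(shares: list[float]) -> float:
    """Mean minus median of Party A's district vote shares.

    Positive: A's typical (median) district is weaker than its average,
    a pattern consistent with A being packed into a few lopsided districts.
    """
    return mean(shares) - median(shares)


def seats_under_uniform_swing(shares: list[float], statewide: float) -> float:
    """Party A's seat share after shifting every district by the same amount
    so the average district share equals `statewide`.

    Assumptions: equal turnout per district and a uniform swing.
    """
    shift = statewide - mean(shares)
    won = sum(1 for s in shares if s + shift > 0.5)
    return won / len(shares)


def partisan_bias(shares: list[float]) -> float:
    """Seat share above (or below) 50% that A would get at a 50-50 vote."""
    return seats_under_uniform_swing(shares, 0.5) - 0.5


# The same toy packed-and-cracked plan, as Party A vote shares.
shares = [0.90, 0.40, 0.40, 0.40, 0.40]
print(f"mean-median: {mean_median_difference(shares):+.2f}")
print(f"partisan bias: {partisan_bias(shares):+.2f}")

# Trace a few points of a simple seat-vote curve under uniform swing.
for v in (0.40, 0.50, 0.55, 0.65):
    print(v, seats_under_uniform_swing(shares, v))
```

In the toy plan, Party A gets half the vote but only one of five seats at 50–50, which shows up as a partisan bias of -0.30 alongside a positive mean–median difference of +0.10. Uniform swing is a simplifying model; it is one reason symmetry measures can mislead when real vote shifts are uneven across districts.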

These tools are useful because they aim at the heart of partisan gerrymandering: asymmetric treatment of parties. But they are not magic. Researchers have documented scenarios where symmetry metrics can mislead if the political geography is unusual or if only certain vote–seat outcomes are realistically achievable in a state.

3) Compactness Scores: Helpful, But Not a Lie Detector

Compactness metrics measure how “squiggly” or stretched-out a district is: Polsby–Popper compares a district’s area with its perimeter, while Reock compares its area with that of the smallest circle enclosing it. They’re tempting because they align with human intuition: a district shaped like a pretzel seems suspicious. But compactness can’t fully diagnose partisan intent. A perfectly compact district can still be a gerrymander if it slices communities strategically, and some non-compact districts are unavoidable due to geography or political boundaries.

Compactness is best used as a supporting indicator: one piece of the puzzle.
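For a sense of how a compactness score works, the Polsby–Popper score is 4πA/P², where A is the district’s area and P its perimeter. It equals 1 for a circle and approaches 0 for long or highly irregular shapes. The sketch below computes it for a simple polygon given as planar (x, y) vertices, using the shoelace formula for area. Real analyses work with projected GIS boundaries and usually rely on a geometry library; Reock, which needs a minimum enclosing circle, is left out to keep the example short. The helper names are illustrative.

```python
import math


def polygon_area(vertices: list[tuple[float, float]]) -> float:
    """Shoelace formula; vertices in order, first point not repeated."""
    n = len(vertices)
    s = 0.0
    for i in range(n):
        x1, y1 = vertices[i]
        x2, y2 = vertices[(i + 1) % n]
        s += x1 * y2 - x2 * y1
    return abs(s) / 2


def polygon_perimeter(vertices: list[tuple[float, float]]) -> float:
    """Sum of edge lengths, closing the loop back to the first vertex."""
    n = len(vertices)
    return sum(math.dist(vertices[i], vertices[(i + 1) % n]) for i in range(n))


def polsby_popper(vertices: list[tuple[float, float]]) -> float:
    """4*pi*area / perimeter^2: 1.0 for a circle, smaller for stretched shapes."""
    return 4 * math.pi * polygon_area(vertices) / polygon_perimeter(vertices) ** 2


square = [(0, 0), (1, 0), (1, 1), (0, 1)]
strip = [(0, 0), (4, 0), (4, 1), (0, 1)]  # same shape family, stretched 4x
print(f"square: {polsby_popper(square):.3f}")
print(f"strip:  {polsby_popper(strip):.3f}")
```

The square scores about 0.785 and the 4-by-1 strip about 0.503, even though neither shape says anything about who lives inside it. That gap between shape and partisan effect is why compactness works best as a supporting indicator.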

The Second Wave: Ensembles and Simulations (a.k.a. “Show Me the Alternative Universes”)

Here’s the big leap the math community helped popularize: instead of judging a map in isolation, generate a large collection of alternative maps that follow the same rules, then see whether the enacted plan is a statistical outlier.

This approach tackles the hardest objection in gerrymandering debates: “Maybe this outcome is just geography.” If you can generate thousands (or millions) of legally compliant maps using the same criteria (equal population, contiguity, keeping counties intact where required, and so on), you get a baseline distribution of outcomes. If the enacted map sits in the extreme tail of that distribution, you have stronger evidence that the plan is unusual in ways that can’t be explained by constraints alone.
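Once an ensemble exists, the outlier comparison itself is simple. This sketch assumes you already have one summary number per ensemble map (here, seats won by one party) and asks what fraction of the ensemble is at least as extreme as the enacted plan. The seat counts are made-up placeholders, not results from any real state, and `tail_fraction` is a hypothetical helper. Because successive MCMC samples are correlated, this fraction is best read as a description of how unusual the plan is, not automatically as a formal p-value.

```python
from collections import Counter


def tail_fraction(ensemble: list[int], enacted: int, side: str = "upper") -> float:
    """Fraction of ensemble plans at least as extreme as the enacted plan."""
    if side == "upper":
        hits = sum(1 for v in ensemble if v >= enacted)
    elif side == "lower":
        hits = sum(1 for v in ensemble if v <= enacted)
    else:
        raise ValueError("side must be 'upper' or 'lower'")
    return hits / len(ensemble)


# Hypothetical ensemble: seats won by Party A in 100 simulated compliant maps.
ensemble_seats = [5] * 30 + [6] * 50 + [7] * 19 + [8] * 1
enacted_seats = 8

# A quick text histogram of the baseline distribution (one # per 2 maps).
for seats, count in sorted(Counter(ensemble_seats).items()):
    print(f"{seats} seats | {'#' * (count // 2)}")

print("share of ensemble at least as favorable to A:",
      tail_fraction(ensemble_seats, enacted_seats))
```

Here only 1 of the 100 placeholder maps gives Party A eight seats, so the enacted plan sits in the far upper tail (a fraction of 0.01).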

How Ensembles Are Built: Markov Chains and a Lot of Patience

A common method uses Markov chain Monte Carlo (MCMC) sampling. In plain English: start with one valid map, propose a small change that preserves the rules, accept or reject it according to the sampler’s acceptance rule, and repeat, producing a “walk” through the space of compliant district plans. Over time, this can generate a broad sample of plausible maps. Open-source tooling has made this approach more accessible, allowing researchers and civic groups to build ensembles and compare outcomes transparently.

Why does this matter? Because it reframes the debate. Instead of arguing over whether a single metric is “the” standard, the ensemble method asks: “Given your rules, your population, and your geography, what range of outcomes is typical?” That’s a question math is very good at answering.
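The “small change, check the rules, repeat” loop can be sketched on a toy grid. This is a deliberately simple single-cell flip walk, assuming a 4×4 grid of equal-population units, two districts, contiguity via rook adjacency (cells sharing an edge), and a ±25% population tolerance. Every valid proposal is accepted, so the walk does not target a uniform distribution over plans. Production tools (for example, the open-source GerryChain library) use real census geography and more sophisticated proposals such as ReCom; none of the function names below come from those tools.

```python
import random
from collections import deque

Node = tuple[int, int]


def grid_neighbors(node: Node, rows: int, cols: int):
    """Yield rook-adjacent cells (shared edge) inside the grid."""
    r, c = node
    for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
        nr, nc = r + dr, c + dc
        if 0 <= nr < rows and 0 <= nc < cols:
            yield (nr, nc)


def is_contiguous(cells, rows: int, cols: int) -> bool:
    """Breadth-first search: can every cell be reached from one start cell?"""
    cells = set(cells)
    if not cells:
        return False
    start = next(iter(cells))
    seen, queue = {start}, deque([start])
    while queue:
        for nb in grid_neighbors(queue.popleft(), rows, cols):
            if nb in cells and nb not in seen:
                seen.add(nb)
                queue.append(nb)
    return len(seen) == len(cells)


def flip_step(plan, rows, cols, pop, tolerance, rng):
    """Propose moving one boundary cell into a neighboring district.

    Returns the new plan if it stays contiguous and population-balanced;
    otherwise returns the current plan unchanged (the walk stays put).
    """
    boundary = [(cell, plan[nb]) for cell in plan
                for nb in grid_neighbors(cell, rows, cols) if plan[nb] != plan[cell]]
    cell, new_district = rng.choice(boundary)
    old_district = plan[cell]
    proposal = dict(plan)  # copy, so earlier plans are never mutated
    proposal[cell] = new_district
    # Rule 1: the district losing the cell must stay contiguous and non-empty.
    # The receiving district stays contiguous because the cell touches it.
    losing = [c for c, d in proposal.items() if d == old_district]
    if not is_contiguous(losing, rows, cols):
        return plan
    # Rule 2: every district within +/- tolerance of the ideal population.
    totals: dict[int, int] = {}
    for c, d in proposal.items():
        totals[d] = totals.get(d, 0) + pop[c]
    ideal = sum(pop.values()) / len(totals)
    if any(abs(t - ideal) > tolerance * ideal for t in totals.values()):
        return plan
    return proposal


def run_chain(plan, rows, cols, pop, tolerance, steps, seed=0):
    """Run the walk and return the plan visited at each step."""
    rng = random.Random(seed)
    samples = []
    for _ in range(steps):
        plan = flip_step(plan, rows, cols, pop, tolerance, rng)
        samples.append(plan)
    return samples


if __name__ == "__main__":
    rows, cols = 4, 4
    pop = {(r, c): 1 for r in range(rows) for c in range(cols)}
    start = {(r, c): 0 if c < 2 else 1 for r in range(rows) for c in range(cols)}
    samples = run_chain(start, rows, cols, pop, tolerance=0.25, steps=1000, seed=1)
    distinct = {tuple(sorted(p.items())) for p in samples}
    print(f"visited {len(distinct)} distinct plans in {len(samples)} steps")
```

Each visited plan could then be scored with any metric (seats, efficiency gap, county splits) to build the baseline distribution. The rejection branch returns the current plan so the chain records a “stay” step, which is the usual way such walks handle invalid proposals.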

Outlier Evidence: When a Map Is “Unusually Favorable”

Suppose a state’s voting behavior is roughly 50–50, but an enacted plan consistently produces a durable 70–30 seat split for one party under many election scenarios. Maybe that’s geography. Or maybe it’s design. Ensemble analysis can test that by showing whether most compliant maps cluster around a more proportional outcome while the enacted map lands far away from the crowd.

Researchers often evaluate multiple election datasets (e.g., several recent statewide races) because any single election can be noisy. They may also test sensitivity: do results persist under different modeling assumptions, or do they vanish if you tweak one parameter? The goal is not to claim omniscience; it’s to build robust evidence.
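Checking whether a finding survives several elections can be organized as a loop over datasets. In this sketch every number is a hypothetical placeholder: for each past race we assume the enacted plan’s district-level vote shares for Party A and a precomputed list of seats Party A wins across ensemble maps under that same race. The 5% cutoff is an illustrative choice, not a legal or scientific standard, and `robustness_report` is a made-up name.

```python
from statistics import mean


def seats_won(shares: list[float]) -> int:
    """Districts where Party A's two-party share exceeds 50%."""
    return sum(1 for s in shares if s > 0.5)


def robustness_report(enacted_shares, ensemble_seats, cutoff=0.05):
    """Compare the enacted plan's seats with the ensemble, race by race.

    Returns per-race (average district share, enacted seats, upper-tail
    fraction) and whether the plan falls in the upper tail in every race.
    The average district share approximates the statewide share only if
    turnout is equal across districts (an assumption).
    """
    report = {}
    for race, shares in enacted_shares.items():
        enacted = seats_won(shares)
        ensemble = ensemble_seats[race]
        tail = sum(1 for s in ensemble if s >= enacted) / len(ensemble)
        report[race] = (mean(shares), enacted, tail)
    persistent = all(tail <= cutoff for _, _, tail in report.values())
    return report, persistent


# Hypothetical 10-district state evaluated with two past statewide races.
enacted_shares = {
    "race_1": [0.55] * 7 + [0.38, 0.35, 0.32],
    "race_2": [0.53] * 7 + [0.40, 0.36, 0.30],
}
ensemble_seats = {
    "race_1": [4] * 20 + [5] * 60 + [6] * 19 + [7] * 1,
    "race_2": [4] * 40 + [5] * 50 + [6] * 10,
}
report, persistent = robustness_report(enacted_shares, ensemble_seats)
for race, (avg_share, seats, tail) in report.items():
    print(f"{race}: avg share {avg_share:.3f}, enacted seats {seats}, tail {tail:.2f}")
print("outlier in every race:", persistent)
```

With these placeholders, Party A wins 7 of 10 seats in both races while averaging just under half the vote, and the plan lands in the upper tail each time. Tightening the cutoff to 0.005 flips the conclusion for race_1, which is exactly the kind of sensitivity worth documenting.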

Why “One Metric to Rule Them All” Usually Backfires

If you’ve ever tried to rank your friends using one number, you already understand the problem. (Friendship is multidimensional. Also, your friends will find out.) Gerrymandering is similarly multidimensional.

Scholars have cautioned against relying on a single metric because different measures can be “gamed” or can disagree depending on state geography, electoral volatility, and how districts translate votes into seats. Some recent work demonstrates that even maps with extreme partisan outcomes can sometimes score within “reasonable” bounds across multiple metrics, especially when a map is drawn to exploit the blind spots of each formula.

That’s why modern expert practice tends to use a portfolio approach:

- Use multiple partisan metrics (efficiency gap, symmetry measures, seat–vote analyses).

- Check district shape and boundary splits (compactness, county/municipal splits).

- Evaluate communities of interest and compliance with state criteria.

- Run ensemble simulations to test whether the enacted plan is an outlier under the same constraints.

The most persuasive analyses typically show convergence: different tools, different datasets, and different assumptions all point toward the same conclusion. That convergence is what makes the math feel less like a trick and more like a thermometer reading.

Math in the Real World: How Experts Plug Into Redistricting

The anti-gerrymandering math effort isn’t just academics writing papers that collect dust. It shows up in several practical arenas:

1) Expert Testimony and Litigation Support

In state-level cases and Voting Rights Act litigation, courts often face competing narratives. Quantitative experts can explain whether a plan’s outcomes are consistent with neutral criteria or whether they appear engineered. Effective testimony usually translates technical findings into plain language: “Here’s what’s typical. Here’s what this plan does. Here’s how rare that is.”

2) Public Comment and Commission Submissions

Many states now solicit public input or rely on commissions. That creates opportunities for mathematicians and data scientists to submit analysis, draft alternative maps, and help communities understand tradeoffs. The best contributions don’t just criticize; they show feasible improvements that still satisfy the rules.

3) Open-Source Tools and Civic Tech

One of the quiet revolutions has been the growth of open-source redistricting software and educational projects that make the math legible. Instead of “trust us,” researchers increasingly provide code, methods, and replicable workflows. That’s not just good science; it’s good democracy.

But Can Math “Solve” Gerrymandering?

Math can’t prevent a legislature from trying to game the system. And it can’t replace the civic values embedded in redistricting criteria: preserving communities, ensuring minority representation, respecting local boundaries, and maintaining accountability. What math can do is reduce plausible deniability.

Think of it like this: if redistricting used to be a dimly lit room where someone could rearrange the furniture and swear nothing changed, quantitative analysis turns on the overhead lights. You can still move the couch, but everyone can see where it went.

Where Reform Meets Reality: Rules, Processes, and Incentives

Even the strongest math works best when paired with good process design. Reformers often focus on who draws the maps: legislatures or commissions. But the rules and constraints matter too: clear criteria, transparency requirements, opportunities for public input, and enforceable standards.

Independent redistricting commissions can reduce direct conflicts of interest, but commissions still need strong rules and data transparency to earn trust.

State-by-state differences also matter. Some places emphasize communities of interest; others prioritize minimizing splits of counties or cities. Some states have robust public mapping processes; others operate behind closed doors. The math community’s contribution is flexible: build methods that can adapt to each state’s legal and geographic realities rather than pretending one national standard fits everyone perfectly.

Experiences Related to “Math Experts Combining Their Powers to Take on Gerrymandering”

The most interesting “on-the-ground” experiences in this space tend to look less like a Hollywood courtroom drama and more like a group project where everyone secretly cares a lot. Here are a few common, real-world scenarios that illustrate how math experts combine forces without pretending there’s one perfect answer hiding in a spreadsheet.

Experience 1: The Workshop Where Everyone Learns to Speak Human

A frequent early challenge is communication. A statistician may feel perfectly comfortable saying a map is in the “0.5th percentile of an ensemble distribution,” but a community member wants to know: “Does that mean our neighborhood got split on purpose?” In workshops and trainings, technical experts practice translating results into everyday language, and community advocates explain what “community of interest” means in lived terms: school zones, transit corridors, shared water systems, local economies, language access, and cultural ties. The result is a two-way upgrade: mathematicians learn which questions matter most, and advocates gain tools to back up their concerns with evidence.

Experience 2: The Moment the Computer Says, “That Map Is Weird”

Ensemble analysis often produces an “aha” moment: you generate thousands of compliant maps and watch outcomes cluster, and then the enacted map sits alone, far away, like it brought potato salad to a pizza party. That moment can be powerful, but responsible teams treat it as the start of the investigation, not the end. They ask: did we model the state’s legal criteria correctly? Did we use appropriate election data? Are there geographic constraints (rivers, mountains, sparse rural areas) that naturally shape outcomes? Good practice means stress-testing the conclusion until it’s either sturdier or honestly less certain.

Experience 3: The Community Map That Beats the “Experts”

Another recurring experience is humility. Technical teams sometimes draft maps that look clean on paper but ignore community realities. Then local residents submit an alternative that better respects neighborhoods, keeps school districts intact, and still meets population equality, often with competitive outcomes that don’t lock in one party. This is where math “combines powers” with civic knowledge: analysts can quantify tradeoffs (compactness vs. county splits vs. partisan symmetry), while communities can define what deserves to stay together. When those inputs align, the result is usually stronger than either group working alone.

Experience 4: The “Metric Disagreement” Argument (and How Teams Handle It)

In practice, teams often see metrics disagree. The efficiency gap might flag a map as extreme while a symmetry metric looks less dramatic, or vice versa. Instead of picking a favorite metric like it’s a fantasy football draft, experienced analysts treat disagreement as diagnostic information. Why do the measures differ? Is it a turnout-driven effect? Are there many lopsided districts? Is there an urban concentration that naturally produces certain patterns? Teams document these dynamics so decision-makers don’t get trapped in a one-number debate.

Experience 5: The “Build Trust or Lose the Room” Lesson

The final experience is about credibility. Because redistricting is politically charged, technical work must be transparent and reproducible. The most trusted efforts openly share assumptions, data sources, and limitations. They present results with uncertainty where uncertainty is warranted. And they avoid the temptation to declare math a substitute for values. In other words: the most effective “math experts combining their powers” behave less like wizards and more like careful auditors, showing their work, inviting scrutiny, and helping the public see the difference between unavoidable tradeoffs and engineered advantage.

Conclusion: The Map Isn’t Destiny When the Math Is Public

Gerrymandering thrives in ambiguity: complex rules, messy geography, and just enough plausible deniability to make unfair maps sound inevitable. Math experts are shrinking that ambiguity. By combining clear metrics with ensemble simulations, open-source tools, and plain-language reporting, they’re turning redistricting into something closer to a testable process than a backroom art project.

No method will eliminate political incentives. But when communities, commissions, courts, and journalists can evaluate maps against transparent baselines, and when outlier plans are easier to identify, line drawers have a harder time pretending that a “coincidental” advantage just happened to appear. In the long run, that’s the real superpower: making fairness measurable enough that it becomes harder to ignore.