Table of Contents

- The Aftermath Wasn’t One Problem: It Was a Whole Playlist

- Monitoring Networks Grew Up: From “Emergency Detection” to “Always-On Baselines”

- Health Science Adapted: Dose Reconstruction, Risk Models, and Hard Conversations

- Science Went Underground (Literally) and Had to Learn a New Planet

- Seismology’s Glow-Up: Treaty Verification Made Earth Science Better

- Satellites Built to Catch Cheaters Ended Up Finding Exploding Stars

- Cleanup, Stewardship, and the Long Game of Environmental Science

- How the Aftermath Changed the Culture of Science

- Conclusion: Adaptation Meant Turning Spectacle into Stewardship

- Experiences from the Field: What It’s Like to Study the Aftermath

Picture a mid-century scientist squinting at a paper strip chart while a Geiger counter chatters like it just drank three cups of coffee. Outside, the world is trying to “move on” from the Cold War’s most dramatic science fair projects: nuclear weapons tests. Inside, researchers are doing the less photogenic work of measuring fallout, tracking health effects, building monitoring networks, and figuring out how to study (and clean up) a legacy that doesn’t respect state lines, property fences, or political slogans.

The big twist is that “the aftermath” didn’t end when the last tower shot was fired or when the headlines faded. It became a long-running scientific problem: part public health, part environmental monitoring, part geophysics, part data science, and part diplomacy. The Cold War created new kinds of hazards. The post–Cold War era forced science to become fluent in detection, uncertainty, and accountability.

The Aftermath Wasn’t One Problem: It Was a Whole Playlist

Nuclear testing left behind more than iconic photos. It produced radioactive byproducts that behaved differently depending on the isotope, the test type (atmospheric vs. underground), the weather, and the way people lived. Some radionuclides decayed quickly; others persisted long enough to become a multi-decade homework assignment for scientists and regulators.
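
To make “decayed quickly” versus “persisted” concrete, here is a minimal Python sketch of exponential decay, where the fraction remaining after time t is 2^(-t / T½). The half-lives are standard rounded reference values (about 8.02 days for I-131, 28.8 years for Sr-90, and 30.1 years for Cs-137); the choice of isotopes and the one-year horizon are illustrative, not taken from any particular study.

```python
# Rounded physical half-lives, in days (standard reference values).
HALF_LIFE_DAYS = {
    "I-131": 8.02,
    "Sr-90": 28.8 * 365.25,
    "Cs-137": 30.1 * 365.25,
}


def fraction_remaining(isotope: str, elapsed_days: float) -> float:
    """Fraction of the initial atoms (and hence activity) left: 2 ** (-t / T_half)."""
    return 2.0 ** (-elapsed_days / HALF_LIFE_DAYS[isotope])


if __name__ == "__main__":
    one_year = 365.25
    for name in HALF_LIFE_DAYS:
        print(f"{name}: {fraction_remaining(name, one_year):.3g} of initial activity after one year")
```

After one year, more than 45 I-131 half-lives have elapsed and the iodine is effectively gone, while roughly 98% of the Sr-90 and Cs-137 remains. That contrast is why iodine was mainly a short-window problem tied to fresh milk, while strontium and cesium became the multi-decade homework.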

Fallout science had to get specific, fast

In the 1950s and early 1960s, above-ground tests sent radioactive material into the atmosphere and across large distances. That forced researchers to shift from “Did anything happen?” to “What exactly happened, where, how much, and to whom?” One especially consequential isotope was iodine-131 (I-131), which can concentrate in the thyroid. When it shows up in the environment, your risk picture suddenly depends on age, diet, milk supply chains, and geography, not just on how far you live from a test site.

The scientific response became a mix of radiochemistry (what’s in the sample), exposure science (how it reaches people), and epidemiology (what it does to health). This is where the story turns from mushroom clouds to spreadsheets: if you want to understand exposure decades later, you need models, historical monitoring data, and a willingness to admit what you can’t know perfectly.

Monitoring Networks Grew Up: From “Emergency Detection” to “Always-On Baselines”

One of the most durable adaptations was the creation (and continual upgrading) of environmental radiation monitoring. Early systems were built to detect radioactive releases quickly. Over time, they evolved into baseline-tracking systems: measuring what “normal” looks like so that “not normal” can be spotted fast.

RadNet and the art of knowing what background looks like

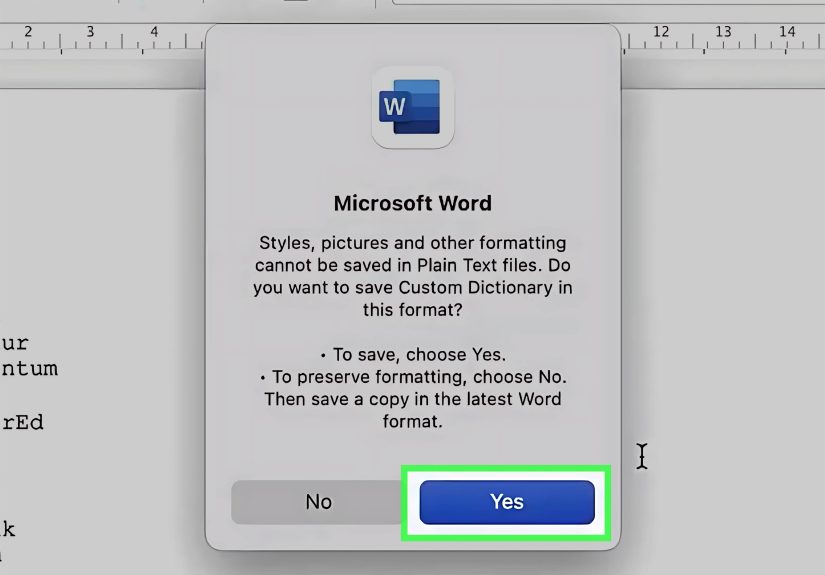

In the United States, EPA’s RadNet (and its predecessors) became a national framework for monitoring environmental radiation. The timeline matters: these programs developed through decades of nuclear testing, then carried forward into an era in which concerns included accidents, overseas events, and long-term trends. That continuity is a scientific superpower. If you don’t have historical data, you can’t confidently say whether a measurement is a blip, a trend, or your instrument having a bad day.
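
The blip-versus-trend question can be illustrated with a simple baseline check: compare a new reading against the mean and spread of historical readings from the same station. This is a minimal Python sketch under assumed conditions (a roughly stable background, a hypothetical 3-sigma threshold, and invented readings in arbitrary units); it is not RadNet’s actual screening procedure.

```python
import statistics


def baseline_flag(history: list[float], reading: float, k: float = 3.0) -> tuple[bool, float]:
    """Compare a new reading with a station's historical baseline.

    Returns (is_anomalous, z_score). Assumes `history` holds comparable,
    calibrated readings from the same station and that background is roughly
    stable; the default threshold k = 3 is a hypothetical choice.
    """
    if len(history) < 2:
        raise ValueError("need at least two historical readings to estimate spread")
    mean = statistics.fmean(history)
    spread = statistics.stdev(history)  # sample standard deviation
    if spread == 0:
        raise ValueError("historical readings show no variation; check the instrument")
    z = (reading - mean) / spread
    return abs(z) > k, z


if __name__ == "__main__":
    # Invented readings in arbitrary units, standing in for a station's history.
    history = [10.2, 9.8, 10.1, 10.0, 9.9, 10.3, 9.7]
    for new_reading in (10.4, 12.0):
        flagged, z = baseline_flag(history, new_reading)
        print(f"reading={new_reading}: z={z:.1f}, flagged={flagged}")
```

With these invented values, 12.0 sits more than nine standard deviations above the mean and is flagged, while 10.4 is not. A real program would also have to account for seasonal patterns, known contributors such as radon, and instrument drift before calling anything an anomaly.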

This “baseline mindset” changed environmental science. Researchers learned to build networks that collect comparable samples over long periods, maintain calibration standards, and publish data that can be interpreted by people outside the original program. In plain English: the science had to become legible, not just correct.

Detection got more nuanced than a single number

Another lesson: you can’t interpret radiation measurements without context. Natural background radiation varies by geology and altitude. Radon adds to background levels, medical procedures add to personal exposure, and even rainfall patterns matter, because rain can wash airborne radioactivity to the ground. Post-testing science became more careful about explaining uncertainty, detection limits, and what a measurement does not mean. That’s a quiet revolution, because public trust often rises or falls on whether scientists can communicate “here’s what we know” without implying “here’s what you should panic about.”
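
Detection limits are one concrete way that uncertainty gets communicated. For simple counting measurements, Currie’s classic formulation defines a decision threshold L_C (above which a net result is declared “detected”) and a detection limit L_D (the smallest true net signal that will exceed L_C with high probability). This Python sketch assumes Poisson counting statistics, a paired blank counted for the same time as the sample, 5% false-positive and false-negative rates, and a hypothetical background of 100 counts.

```python
import math

K = 1.645  # one-sided standard normal quantile for 5% error rates


def currie_limits(background_counts: float) -> tuple[float, float]:
    """Return (L_C, L_D) in net counts for a simple counting measurement.

    Assumes Poisson statistics, a paired blank counted for the same time as the
    sample, and alpha = beta = 0.05:
        L_C = K * sqrt(2 * B)    (about 2.33 * sqrt(B))
        L_D = K**2 + 2 * L_C     (about 2.71 + 4.65 * sqrt(B))
    """
    if background_counts < 0:
        raise ValueError("background counts cannot be negative")
    l_c = K * math.sqrt(2.0 * background_counts)
    l_d = K ** 2 + 2.0 * l_c
    return l_c, l_d


if __name__ == "__main__":
    l_c, l_d = currie_limits(100.0)  # hypothetical background of 100 counts
    print(f"decision threshold L_C = {l_c:.1f} net counts")
    print(f"detection limit    L_D = {l_d:.1f} net counts")
```

With a background of 100 counts, a net result above about 23 counts is declared detected, and a true net signal of about 49 counts would be detected 95% of the time. A result below L_C is reported as “not detected,” which is not the same as “zero.”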

Health Science Adapted: Dose Reconstruction, Risk Models, and Hard Conversations

The aftermath forced a shift from immediate incident response to long-latency questions. Many radiation-related health outcomes (including certain cancers) can take years or even decades to develop. That means the key scientific question often becomes “What was the dose?”, especially when you’re trying to estimate exposures that happened before modern digital monitoring and before everyone carried a camera in their pocket.

The rise of dose reconstruction

A major example is the work on I-131 exposure from Nevada atmospheric tests. Researchers had to combine historical test information, meteorology, deposition patterns, food pathways (especially milk), and demographics to estimate thyroid doses and associated risks. This kind of research isn’t just number crunching; it’s forensic science with weather maps.
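
The pathway logic can be sketched as a chain of multiplications (deposition, transfer to milk, milk intake, dose per unit intake), with uncertainty carried through by Monte Carlo sampling. Every factor name, distribution, and number below is a hypothetical placeholder in schematic units, chosen only to show the structure; none come from the Nevada I-131 studies.

```python
import math
import random
import statistics

# Hypothetical pathway factors as (median, geometric standard deviation).
# Units are schematic; these are NOT values from any published reconstruction.
PATHWAY = {
    "deposition_to_milk": (1.0, 2.0),  # milk activity per unit ground deposition
    "milk_intake": (0.5, 1.5),         # daily milk consumption
    "dose_coefficient": (1.0, 1.3),    # dose per unit activity ingested
}


def sample_dose(deposition: float, rng: random.Random) -> float:
    """One Monte Carlo draw: deposition multiplied by each lognormal factor."""
    dose = deposition
    for median, gsd in PATHWAY.values():
        dose *= rng.lognormvariate(math.log(median), math.log(gsd))
    return dose


def dose_distribution(deposition: float, n: int = 20_000, seed: int = 1) -> dict[str, float]:
    """Summarize sampled doses as 5th percentile, median, and 95th percentile."""
    rng = random.Random(seed)
    draws = [sample_dose(deposition, rng) for _ in range(n)]
    cuts = statistics.quantiles(draws, n=20)  # 19 cut points in 5% steps
    return {"p05": cuts[0], "median": statistics.median(draws), "p95": cuts[-1]}


if __name__ == "__main__":
    summary = dose_distribution(deposition=100.0)  # hypothetical deposition value
    print({name: round(value, 1) for name, value in summary.items()})
```

The spread between the 5th and 95th percentiles is the point: a reconstruction reports a range driven by explicit, reviewable assumptions rather than a single number. Real reconstructions also model age-dependent intake and dose coefficients, which this sketch leaves out.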

Dose reconstruction also changed the culture of scientific accountability. When you produce estimates that may affect medical decisions, policy, and compensation programs, your assumptions can’t be hand-wavy. They must be explicit, testable, and open to critique. That’s one reason independent scientific review became a bigger deal in the post-testing era.

From “Should we screen?” to “How do we talk about risk?”

Scientific adaptation wasn’t only technical; it was ethical and communicative. When studies suggest increased risk in certain populations, science has to answer two questions at once:

- Scientific: What is the magnitude of risk, and how confident are we?

- Practical: What should people do with that information?

Public health responses had to balance the benefits and downsides of screening, the anxiety created by uncertain risk, and the reality that “population-level risk” doesn’t map neatly onto a single individual. Post–Cold War science increasingly emphasized risk communication that is clear, specific, and honest about uncertainty, because “trust us” is not a method.

Science Went Underground (Literally) and Had to Learn a New Planet

As nuclear testing moved away from the atmosphere and into underground environments, the scientific problems changed shape. Underground tests reduced widespread airborne fallout compared with above-ground detonations, but they created complex geologic and engineering questions: containment, venting, subsidence craters, groundwater pathways, and long-term stewardship.

The Nevada National Security Site as a case study in complexity

The Nevada National Security Site (historically the Nevada Test Site) hosted more than 900 U.S. nuclear tests between 1951 and 1992, both atmospheric and underground. That legacy triggered long-term scientific work on soil and groundwater monitoring, site characterization, and remediation planning. Even when testing stopped, science didn’t. Stewardship became the new normal: measure, model, mitigate, and keep measuring.

“Peaceful nuclear explosions” taught uncomfortable lessons

Some experiments explored non-military applications. The Plowshare Program’s cratering tests (including the Sedan event) produced dramatic earthmoving, and also a public, visible reminder that “just because you can” isn’t the same as “this is a good idea.” Scientifically, these tests generated data on crater formation and ground shock. Politically and socially, they helped push science toward a more realistic accounting of environmental cost.

Seismology’s Glow-Up: Treaty Verification Made Earth Science Better

One of the most fascinating adaptations is how nuclear-test detection drove advances in seismology. If nations were going to limit or ban tests, they needed ways to verify compliance. That meant distinguishing underground explosions from earthquakes, a problem that sounds simple until you realize the Earth is noisy, complicated, and not obligated to cooperate.
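
One classic discriminant compares two magnitude scales: for a given body-wave magnitude (mb), explosions tend to generate weaker long-period surface waves (measured as Ms) than earthquakes do, and explosions are also confined to shallow depths. This Python sketch encodes both ideas with a hypothetical offset, a hypothetical depth cutoff, and invented events; operational criteria are calibrated region by region against events of known type and are combined with other evidence.

```python
from dataclasses import dataclass


@dataclass
class SeismicEvent:
    mb: float        # body-wave magnitude
    ms: float        # surface-wave magnitude
    depth_km: float  # estimated source depth


# Hypothetical screening parameters, for illustration only.
MS_OFFSET = 1.0               # flag when Ms < mb - MS_OFFSET
MAX_EXPLOSION_DEPTH_KM = 10.0  # deeper sources are treated as natural


def screen_event(event: SeismicEvent) -> str:
    """Rough screening label from source depth and the mb:Ms comparison."""
    if event.depth_km > MAX_EXPLOSION_DEPTH_KM:
        return "earthquake-like (too deep for a plausible explosion)"
    if event.ms < event.mb - MS_OFFSET:
        return "explosion-like (weak surface waves for its mb)"
    return "not flagged (surface waves consistent with a natural source)"


if __name__ == "__main__":
    # Invented events for illustration.
    for ev in (SeismicEvent(mb=5.0, ms=3.6, depth_km=1.0),
               SeismicEvent(mb=5.0, ms=4.9, depth_km=12.0)):
        print(ev, "->", screen_event(ev))
```

Events that land near the decision line are the hard cases, which is why classification came to rely on statistics and multiple data types rather than any single rule.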

Networks, standards, and a global view of the ground beneath us

U.S.-led programs helped expand seismographic monitoring and research. Work associated with treaty verification (notably the VELA Uniform research program) helped build standardized seismograph networks such as the World-Wide Standardized Seismograph Network (WWSSN), deployed in the early 1960s, along with new methods for analyzing seismic signals. The scientific payoff extended beyond nuclear detection: better instrumentation and broader networks improved earthquake catalogs and deep-Earth research. In other words, the Cold War funded an awkward but real gift to geophysics: more data, more coverage, better tools.

And here’s where science got humbled, in a useful way. Detection isn’t just “hear something” but “classify something.” That pushed researchers to build statistical methods, signal processing techniques, and cross-checking strategies (seismic + atmospheric + satellite data) that resemble modern sensor fusion. Today, many fields (earthquake early warning, remote sensing, even some climate monitoring) share that DNA.
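
The cross-checking idea can be illustrated with simple Bayesian evidence fusion: each data source contributes a likelihood ratio, and under an assumption of conditional independence their logarithms add to the prior log-odds. This Python sketch treats that independence as given (it often is not strictly true in practice), and the prior and likelihood ratios are hypothetical numbers, not values from any verification regime.

```python
import math


def fuse_evidence(prior_probability: float, likelihood_ratios: dict[str, float]) -> float:
    """Posterior probability of 'explosion' after combining independent evidence.

    Each ratio is P(observation | explosion) / P(observation | not explosion).
    Assuming conditional independence, the log-odds simply add.
    """
    if not 0.0 < prior_probability < 1.0:
        raise ValueError("prior must be strictly between 0 and 1")
    log_odds = math.log(prior_probability / (1.0 - prior_probability))
    for source, ratio in likelihood_ratios.items():
        if ratio <= 0:
            raise ValueError(f"likelihood ratio for {source} must be positive")
        log_odds += math.log(ratio)
    return 1.0 / (1.0 + math.exp(-log_odds))


if __name__ == "__main__":
    # Hypothetical example: low prior, supportive seismic and atmospheric
    # evidence, and a satellite observation that mildly argues against.
    evidence = {"seismic": 8.0, "atmospheric": 3.0, "satellite": 0.5}
    print(f"posterior: {fuse_evidence(0.01, evidence):.3f}")
```

The satellite ratio below 1 pulls the posterior down, which shows how one disconfirming sensor tempers the others instead of being outvoted silently.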

Satellites Built to Catch Cheaters Ended Up Finding Exploding Stars

If you want proof that science adapts in unpredictable ways, look up (literally). The U.S. Vela satellites, launched to detect nuclear detonations in the atmosphere and in space, contributed to one of astrophysics’ great discoveries: gamma-ray bursts. The instruments were designed for national security monitoring, but the data hinted at something not from Earth at all. The first such burst was recorded in 1967, and in 1973 researchers reported gamma-ray bursts of cosmic origin, an accidental discovery that reshaped high-energy astronomy.

This is adaptation at its best: the same detection infrastructure built for monitoring nuclear tests helped open a new window on the universe. It’s also a reminder that “aftermath science” doesn’t only mean cleanup and risk; it can include unexpected spin-offs, where tools created for one purpose become foundational for another.

Cleanup, Stewardship, and the Long Game of Environmental Science

When nuclear testing ended, the story didn’t conclude with a satisfying end-credit song. It shifted into long-term site management: contaminated soils, groundwater concerns, legacy facilities, and disposal decisions. This demanded a different kind of science: slow, methodical, and stubbornly committed to measurement over decades.

From experiments to engineered responsibilities

Cleanup and stewardship work at former nuclear-related sites involves characterizing contaminants, understanding how they move through soils and water, and designing controls that remain effective far beyond a normal funding cycle. That’s not just chemistry; it’s systems engineering plus environmental monitoring, plus regulatory coordination, plus public communication.

Federal programs emphasize monitoring and maintenance as continuing obligations, not one-time fixes. Reports and oversight reviews have repeatedly highlighted how complex long-term cleanup can be, including challenges in planning, cost, schedules, and technical uncertainty. Post–Cold War science learned to live with the reality that some problems don’t have a “final” solution, only better management and lower risk.

Why “measure forever” is not as depressing as it sounds

Long-term monitoring can feel like science stuck on repeat, but it’s also a triumph of method. It means you can detect changes early, test whether controls are working, and build an evidence trail strong enough to survive leadership changes, budget cycles, and public skepticism. In a world that loves quick wins, this is the scientific equivalent of flossing: boring, essential, and deeply underrated.
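
Long records pay off because they can be tested for slow trends that no single measurement would reveal. The Mann-Kendall test is one common nonparametric choice in environmental monitoring; this minimal Python sketch omits the tie correction and seasonal variants a real analysis would need, and the annual values in the example are invented.

```python
import math


def mann_kendall(values: list[float]) -> tuple[int, float, float]:
    """Return (S, Z, two-sided p-value) for a monotonic-trend test.

    S counts increasing pairs minus decreasing pairs. The normal approximation
    below ignores ties and is reasonable only for roughly ten or more points.
    """
    n = len(values)
    if n < 3:
        raise ValueError("need at least three observations")
    s = 0
    for i in range(n - 1):
        for j in range(i + 1, n):
            diff = values[j] - values[i]
            s += (diff > 0) - (diff < 0)  # +1, -1, or 0 for this pair
    var_s = n * (n - 1) * (2 * n + 5) / 18.0
    if s > 0:
        z = (s - 1) / math.sqrt(var_s)  # continuity correction
    elif s < 0:
        z = (s + 1) / math.sqrt(var_s)
    else:
        z = 0.0
    p = math.erfc(abs(z) / math.sqrt(2.0))  # two-sided standard normal p-value
    return s, z, p


if __name__ == "__main__":
    # Invented yearly concentrations after a hypothetical remediation.
    yearly = [5.1, 4.9, 5.0, 4.6, 4.4, 4.5, 4.1, 3.9, 4.0, 3.6, 3.5, 3.3]
    s, z, p = mann_kendall(yearly)
    print(f"S={s}, Z={z:.2f}, p={p:.2g}")
```

A strongly negative S on post-remediation data is the kind of evidence trail described above: slow, unglamorous support for the claim that controls are working, despite year-to-year wobbles.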

How the Aftermath Changed the Culture of Science

Beyond instruments and models, the aftermath pressured science to evolve socially. Communities affected by fallout and testing demanded answers, transparency, and agency. That altered how research questions were framed and how results were communicated. It also reinforced norms that are now widely expected: independent review, clearer uncertainty statements, and open access to methods and data where possible.

The Cold War era often treated information as strategic. The post–Cold War era increasingly treated information as a public health necessity. That’s a big cultural adaptation. It didn’t happen overnight, and it wasn’t perfect, but the direction matters: science moved toward being not only a producer of knowledge, but a public-facing translator of risk.

And yes, science got better at admitting what it can’t do. You can’t rerun the 1950s with modern sensors. You can’t reconstruct every exposure with pinpoint precision. But you can build best estimates, quantify uncertainty, improve methods, and keep monitoring. The “aftermath mindset” is essentially scientific adulthood: responsible, transparent, and allergic to magical thinking.

Conclusion: Adaptation Meant Turning Spectacle into Stewardship

Cold War nuclear tests created a scientific challenge that outlived the politics that produced it. In response, science adapted in three big ways: it built nationwide and global monitoring systems, developed sophisticated health-risk and dose-reconstruction methods, and learned to practice long-term environmental stewardship. Along the way, it improved seismology, helped spark discoveries in astronomy, and pushed research culture toward transparency and public accountability.

The lesson isn’t that science “fixed” the aftermath. It’s that science learned to live with it responsibly: measuring what can be measured, modeling what must be inferred, and communicating what people deserve to know. That’s not flashy. But it’s the kind of progress that prevents future harm, informs smarter policy, and (quietly) makes the world safer than the last time someone thought a mushroom cloud was a reasonable way to gather data.

Experiences from the Field: What It’s Like to Study the Aftermath

If you ask researchers what working on nuclear-test aftermath feels like, you often hear the same theme: it’s a science of patience. Field teams don’t walk into a Hollywood crater with dramatic music swelling. They walk into ordinary-looking places: dry washes, fence lines, monitoring wells, dusty equipment sheds. There, the most important tool is usually a clipboard (or, these days, a rugged tablet that still somehow dies at the worst time). The “experience” is less about spectacle and more about consistency: collect the sample the same way every time, log the metadata correctly, and treat every measurement like it may someday matter in a courtroom, a clinic, or a community meeting.

In environmental monitoring, the emotional rhythm can be surprising. A day with “nothing detected above baseline” is technically a success, but it doesn’t always feel like one, especially to newcomers who secretly hoped for a dramatic signal. Veterans learn a different kind of satisfaction: the calm confidence that your instruments are stable, your controls are clean, and your dataset is getting stronger. Over years, that dataset becomes the story. It’s how you spot small changes, confirm that remediation is working, or catch an anomaly early enough to investigate before rumors do the job for you.

Dose reconstruction work has its own texture. It feels like historical detective work mixed with advanced math. Researchers might spend a morning reading old monitoring summaries and weather records, then spend the afternoon arguing (politely, with charts) about uncertainty ranges and modeling assumptions. The weird part is that the most human details can matter: how milk was distributed, what kids typically ate in a region, in which season a test occurred, and how families stored food. That can make the work feel intimate in an unexpected way. You’re not just modeling physics; you’re modeling real lives that happened before anyone asked for permission to run the experiment.

Then there’s the experience of communicating results. Scientists quickly learn that the public doesn’t only want numbers; they want meaning. Community meetings can feel like the hardest part of the job, because people arrive with grief, anger, confusion, or fatigue from decades of not feeling heard. The best communicators don’t “win” the room with technical brilliance. They earn trust by being specific, avoiding jargon, and saying the sentences scientists are trained to dodge: “Here’s what we know. Here’s what we don’t. Here’s what we’re doing next.” It can be emotionally draining work, but it also sharpens the science. When you have to explain your assumptions to people whose families lived under the fallout path, you become less tolerant of sloppy thinking.

Finally, there’s a practical experience that almost everyone in the field recognizes: the quiet pride of turning legacy into learning. A monitoring station that keeps running year after year is a kind of promise kept. A revised sampling protocol that reduces errors is progress you can’t photograph. A model that better represents uncertainty isn’t dramatic, but it’s honest, and honesty is the point. The aftermath of Cold War nuclear testing pushed science to become more interdisciplinary, more transparent, and more aware that the audience isn’t just other scientists. In this work, the real “results” aren’t only papers. They’re better systems, clearer risks, and a world that is slightly less likely to repeat the same mistakes with a bigger budget.