Table of Contents

- First, a quick reality check

- How to Tell if a Video Is AI-Generated: 11 Clear Signs

- 1. The lip-sync feels just a little too wrong

- 2. The face edges look pasted on

- 3. The teeth, tongue, or inside of the mouth turn into mush

- 4. The eyes blink strangely, stare strangely, or emote strangely

- 5. The lighting and shadows do not agree with each other

- 6. Reflections in glasses, eyes, mirrors, or shiny surfaces make no sense

- 7. Text, logos, signs, or tiny design details come out garbled

- 8. Background objects flicker, duplicate, bend, or disappear

- 9. Movement physics feel slippery

- 10. There is no trustworthy provenance, watermark, or origin trail

- 11. The context smells wrong, even if the pixels look fine

- What works better than staring at pixels for 20 minutes?

- Can you always tell if a video is AI-generated?

- Why this matters more than ever

- Experience-Based Takeaways: What Spotting AI Video Feels Like in Real Life

- Conclusion


AI video has officially moved past the “ha, that hand has six fingers” era. These days, synthetic clips can look polished enough to fool tired brains, busy thumbs, and that one cousin who shares everything in the family group chat like they are working a full-time shift for chaos. So if you want to know how to tell if a video is AI-generated, you need more than one trick. You need a method.

The good news is that AI-generated videos, deepfakes, face swaps, and lip-synced fakes still tend to leave clues. Some clues are visual. Some live in the audio. Some live outside the frame entirely, in the account that posted the clip, the missing origin story, or the lack of trustworthy provenance data. In other words, the smartest way to spot an AI-generated video is not to hunt for one magical giveaway. It is to look for a pattern of weirdness.

This guide breaks down 11 clear signs that a video may be AI-generated, plus a few practical ways to verify what you are seeing before you believe it, repost it, or send it to five friends with the caption, “No way this is real.”

First, a quick reality check

Not every fake video looks fake, and not every odd-looking video is AI-generated. Compression, bad lighting, shaky cameras, low bandwidth, aggressive filters, and clumsy editing can make a real clip look suspicious. At the same time, modern generative video tools are getting better fast. That means visual clues still matter, but they work best when you combine them with context, source checking, and provenance signals such as watermarks, metadata, or Content Credentials.

Think of this like detective work, not a magic trick. One clue is interesting. Three or four clues start to tell a story.

How to Tell if a Video Is AI-Generated: 11 Clear Signs

1. The lip-sync feels just a little too wrong

One of the oldest and still most useful warning signs is mismatched speech. Watch the mouth closely. Does it open and close at the right moment? Do certain sounds, especially “B,” “P,” “M,” and “F,” match the visible mouth shape? If the audio feels half a beat ahead or behind the lips, something may be off.

This is especially useful in talking-head videos, celebrity clips, fake interviews, and “urgent message” scam videos. AI lip-sync systems can be impressive, but they still struggle with long sentences, fast speech, side angles, or dramatic facial movement. If the voice sounds natural but the mouth looks like it is freelancing, put your skepticism hat on.
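For the curious, that "half a beat ahead or behind" feeling can be put into numbers. The sketch below is a toy, not a detector. It assumes you already have two per-frame lists of the same length from some other tool: how open the mouth is and how loud the audio is. The names `mouth_open`, `audio_level`, and `best_lag` are made up for illustration. The code slides one list against the other and reports the shift where they line up best.

```python
from statistics import mean

def best_lag(mouth_open, audio_level, max_lag=5):
    """Return the frame shift at which audio and mouth movement correlate best.

    Positive result: the audio trails the lips by that many frames.
    Negative result: the audio leads the lips.
    Both inputs are hypothetical per-frame measurements (at least two frames).
    """
    def corr(a, b):
        # Plain Pearson correlation; returns 0.0 if either series is flat.
        ma, mb = mean(a), mean(b)
        num = sum((x - ma) * (y - mb) for x, y in zip(a, b))
        den = (sum((x - ma) ** 2 for x in a) * sum((y - mb) ** 2 for y in b)) ** 0.5
        return num / den if den else 0.0

    scores = {}
    for lag in range(-max_lag, max_lag + 1):
        if lag >= 0:
            # Compare mouth frame t with audio frame t + lag.
            a, b = mouth_open[: len(mouth_open) - lag], audio_level[lag:]
        else:
            # Compare mouth frame t + |lag| with audio frame t.
            a, b = mouth_open[-lag:], audio_level[: len(audio_level) + lag]
        n = min(len(a), len(b))
        if n >= 2:
            scores[lag] = corr(a[:n], b[:n])
    return max(scores, key=scores.get)

# Invented example: the audio is the mouth signal delayed by two frames.
mouth = [0, 0, 1, 3, 1, 0, 0, 2, 4, 2, 0, 0]
audio = [0, 0] + mouth[:-2]
print(best_lag(mouth, audio))
```

A small nonzero lag proves nothing on its own, since ordinary encoding and streaming hiccups can knock real footage out of sync too. Treat it as one more clue to weigh, not a verdict.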

2. The face edges look pasted on

Face-swapped videos often get weird around the boundaries of the face. Look at the jawline, cheeks, hairline, ears, and chin. Does the skin tone match the neck and forehead? Do the edges look too soft, too sharp, or slightly blurry compared with the rest of the frame? Sometimes the face appears to float on top of the head like it was attached with digital craft glue.

This kind of seam can show up for only a second or two, especially when the subject turns, laughs, covers part of their face, or moves through uneven lighting. So do not just stare at one frozen frame. Play the clip and watch those edges during movement.

3. The teeth, tongue, or inside of the mouth turn into mush

AI is often great at big shapes and surprisingly flaky at tiny details. Teeth may blur together into one smooth bright patch. The tongue may look oddly flat. The inside of the mouth may seem too dark, too clean, or inconsistent from one frame to the next. In some fake videos, the person looks fine until they smile, and then suddenly their mouth becomes abstract art.

That is because small, fast-moving details are still hard for many systems to render consistently. If the mouth area looks less like human anatomy and more like a haunted marshmallow convention, that is a clue.

4. The eyes blink strangely, stare strangely, or emote strangely

People used to say that deepfakes never blink. That is too simple now. Modern AI videos can blink just fine. But blinking, gaze, and micro-expressions can still feel off. Maybe the subject blinks too little, too much, or at odd times. Maybe both eyes do not move together naturally. Maybe the person smiles, but nothing around the eyes joins the party.

Human faces are full of tiny, coordinated movements. AI can imitate them, but sometimes the performance feels like it came from an actor who memorized “how to be a person” from flashcards. If the expression is emotionally mismatched or eerily flat, that matters.

5. The lighting and shadows do not agree with each other

Light is a surprisingly good lie detector for fake video. Check whether the light source makes sense. Are shadows falling in the same direction? Does one side of the face suggest overhead lighting while the background suggests sunlight from a window? Does the person brighten or darken in ways that do not match the room?

AI-generated videos often stumble when they have to keep lighting consistent across moving faces, clothes, and objects. A polished fake may get most of the scene right, then forget the laws of light halfway through a head turn.

6. Reflections in glasses, eyes, mirrors, or shiny surfaces make no sense

Reflections are where fake videos often become unintentionally comedic. A person wearing glasses may have reflections that change in impossible ways. A mirror in the background may not match the room. Catchlights in the eyes may be oddly shaped, placed differently in each eye, or missing altogether. Jewelry, windows, car doors, and polished tables can also betray a synthetic clip.

Real environments obey geometry. AI often gets close enough to impress you and then trips over the details. If reflections do not match the scene, the video deserves a second look.

7. Text, logos, signs, or tiny design details come out garbled

Want a surprisingly effective test? Pause the video and read the small stuff. Street signs, shirt graphics, product labels, billboards, subtitles inside the video, logos on microphones, and text on screens can reveal a lot. AI-generated video still tends to produce letters that wobble, warp, melt, or quietly turn into alphabet soup.

Even when the main subject looks realistic, background text may be nonsense. Brand marks may be almost correct but not quite. That “CNN” chyron might come out as “CWN” or “CNN-ish but cursed.” Tiny errors like these are not proof on their own, but they are classic synthetic media fingerprints.

8. Background objects flicker, duplicate, bend, or disappear

Sometimes the person looks fine, but the world behind them is having a meltdown. A chair leg changes shape. A passerby vanishes after walking behind a lamppost. A handrail bends. A cup teleports three inches to the left. Background windows, trees, crowds, and furniture can shimmer or mutate from frame to frame.

This happens because AI video generators often prioritize the main subject and handle the rest of the frame with less consistency. So while your eye naturally goes to the face, it is smart to scan the corners, edges, and background motion. Fake videos often unravel there first.

9. Movement physics feel slippery

Real people and real objects move with weight, timing, and physical limits. AI-generated videos sometimes miss that. Hair may float oddly. Earrings may jitter. Fingers may merge during gestures. Shoulders may twist in a way that feels anatomically adventurous. Head turns may look smooth in one frame and rubbery in the next.

This is one of those clues you feel before you can explain it. The motion looks almost real, but not convincingly physical. If your first thought is, “Why does this person move like they were rendered in a dream?” you are not imagining things.

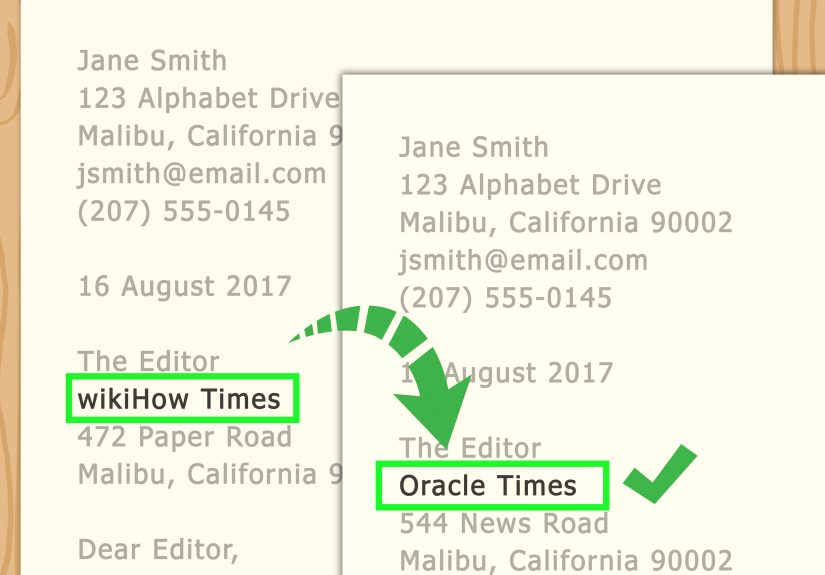

10. There is no trustworthy provenance, watermark, or origin trail

This is a big one. Increasingly, major platforms and AI tools are trying to attach provenance signals to media. These may include visible watermarks, invisible watermarks, metadata, or Content Credentials that show how the file was created or edited. If you have the original file, check whether it contains any authenticity information.

But here is the catch: the absence of provenance does not automatically mean the video is fake. Plenty of real videos have no such data, and many social platforms strip metadata during upload. So treat provenance as a strong positive signal when present, not a perfect lie detector when absent. A video with solid origin information earns trust. A video with no origin trail earns more questions.
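If you do have the original file, one low-effort place to start is the container metadata. The sketch below assumes the separate `ffprobe` tool (part of FFmpeg) is installed. The helper names `probe` and `origin_hints` and the sample values are invented for illustration. It only reads ordinary tags such as creation time or encoder. It does not verify Content Credentials or watermarks, which need dedicated tools.

```python
import json
import subprocess

def probe(path):
    """Run ffprobe (installed separately) and return its JSON report as text."""
    cmd = ["ffprobe", "-v", "quiet", "-print_format", "json",
           "-show_format", "-show_streams", path]
    return subprocess.run(cmd, capture_output=True, text=True, check=True).stdout

def origin_hints(ffprobe_json):
    """Collect container- and stream-level tags that hint at a file's origin."""
    data = json.loads(ffprobe_json)
    hints = {}
    sources = [data.get("format", {})] + data.get("streams", [])
    for source in sources:
        for key, value in source.get("tags", {}).items():
            # Keep the first value seen for each tag name.
            hints.setdefault(key.lower(), value)
    return hints

# Made-up sample in the general shape ffprobe prints; real files will differ.
sample = (
    '{"format": {"tags": {"creation_time": "2024-01-01T00:00:00Z", '
    '"encoder": "ExampleEncoder"}}, '
    '"streams": [{"tags": {"handler_name": "VideoHandler"}}]}'
)
print(origin_hints(sample))
```

With a real file you would call `origin_hints(probe("clip.mp4"))`, where `clip.mp4` is a placeholder path. An empty result is common and means very little, since many platforms strip these tags on upload. Tags can also be edited, so anything found here is a lead to follow up, not a certificate.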

11. The context smells wrong, even if the pixels look fine

Sometimes the clearest sign is not in the video itself. It is in the circumstances around it. Did the clip appear on a brand-new account? Is there no original source? Is it making a shocking claim that no reliable news outlet is confirming? Is it designed to trigger instant outrage, fear, or urgency? Is the speaker suddenly saying something wildly out of character or asking for money, passwords, or immediate action?

That matters because many of the most dangerous AI-generated videos are built to manipulate emotion before logic has time to wake up. If a clip seems tailored to make you panic, donate, invest, vote, or repost within 10 seconds, slow down. Scam artists and misinformation peddlers love artificial urgency.

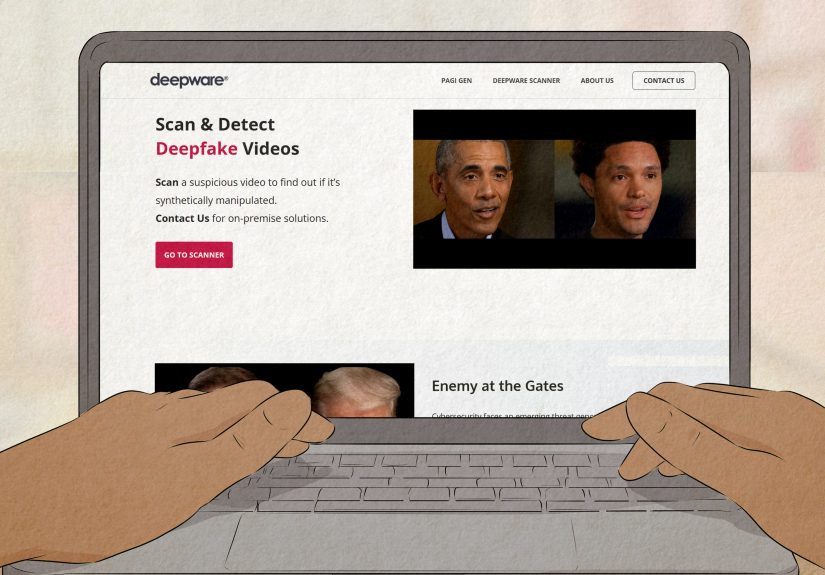

What works better than staring at pixels for 20 minutes?

If you really want to know how to tell if a video is AI-generated, do not stop at visual clues. Use a short verification routine:

- Find the original upload. Do not judge a screen recording of a repost of a repost of a repost. That is how truth goes to die.

- Check the source account. Look at when it was created, what else it posts, and whether it has a real history.

- Look for corroboration. If the video shows a major event, reliable news organizations or official accounts should usually have matching coverage.

- Inspect provenance. If you have the original file, look for Content Credentials, metadata, or verification clues.

- Reverse search key frames. Screenshots can help you trace older versions or reveal that a clip has been altered.

- Trust clusters, not one clue. A single weird blink means very little. Weird blink, warped text, bad reflections, and a sketchy source? That is a different story.
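The "clusters, not one clue" rule can be written down as a tiny tally. This is a note-taking aid under assumptions, not a detector. The clue names, the `weigh_clues` function, and the cut-off of three are all invented here, loosely following the "three or four clues start to tell a story" rule of thumb from earlier in this guide.

```python
# Hypothetical labels for the 11 signs covered in this guide.
KNOWN_CLUES = {
    "lip_sync", "face_edges", "mouth_detail", "eyes", "lighting",
    "reflections", "garbled_text", "background", "physics",
    "no_provenance", "bad_context",
}

def weigh_clues(observed):
    """Count distinct recognized clues and return (count, plain-language verdict)."""
    clues = set(observed) & KNOWN_CLUES  # ignore duplicates and unknown labels
    count = len(clues)
    if count == 0:
        verdict = "no red flags noted"
    elif count < 3:  # assumed threshold, not a measured one
        verdict = "interesting, keep checking"
    else:
        verdict = "a pattern, verify before sharing"
    return count, verdict

print(weigh_clues(["eyes"]))
print(weigh_clues(["eyes", "garbled_text", "reflections", "bad_context"]))
```

The count is only as good as the observations behind it, and a clean tally does not prove a clip is real. Its job is to slow you down long enough to run the rest of the routine.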

Can you always tell if a video is AI-generated?

No. And that is the part people do not love hearing.

Some AI-generated videos are now realistic enough that average viewers will not reliably spot them with the naked eye. That is why media literacy, source verification, and provenance tools matter so much. The future of spotting deepfakes is not just “look harder.” It is “verify smarter.”

So if you are looking for a perfect checklist that works every time, that checklist does not exist. But a practical checklist absolutely does. And it will save you from a lot of obvious nonsense, plus a fair amount of polished nonsense.

Why this matters more than ever

AI-generated video is not just internet weirdness. It is tied to scams, impersonation, fake endorsements, disinformation, brand fraud, reputational damage, and manipulated political content. A synthetic video can be used to make a public figure seem to say something inflammatory, make a boss appear to request a wire transfer, or make a stranger look like a trusted friend in a crisis message.

That is why learning how to spot an AI-generated video is now part of basic digital self-defense. It is not paranoia. It is modern common sense.

Experience-Based Takeaways: What Spotting AI Video Feels Like in Real Life

If you spend enough time online, you start noticing that suspicious videos rarely fail in just one way. They fail in layers. The first layer is usually emotional. The clip wants you to feel something immediately: shock, fear, rage, awe, pity, urgency, tribal loyalty, or all seven before breakfast. That emotional rush is often the first clue. Real footage can absolutely be emotional too, of course, but AI-generated videos made for manipulation usually come in hot. They do not knock. They kick the door down and yell, “Share me now!”

Then comes the second layer: the tiny visual wobble your brain catches before your conscious mind can explain it. Maybe it is the mouth. Maybe it is the way someone’s face stays weirdly smooth while the rest of the frame is noisy. Maybe the speaker turns their head and the ears look like they changed careers mid-scene. You may not know exactly what is wrong, but you feel it. The experience is a lot like hearing a familiar song played slightly out of tune. Close enough to register. Wrong enough to itch.

After that, experienced viewers usually stop staring only at the face. They start roaming. They look at the background. They look at signs, captions, product labels, and reflections. They watch what happens when a hand crosses in front of the body. They replay the clip with the sound off. Then they replay it while staring only at the corners. This is where many fake videos lose their confidence. The main subject may still look convincing, but a window reflection starts improvising, a logo mutates, or a person in the background evaporates like they were never emotionally prepared for existence.

Another common experience is realizing that the video itself is not the only problem. The account posting it is weird too. Maybe it has no real history. Maybe it was created last week. Maybe every post is outrage bait. Maybe the caption says something dramatic, but there is no date, no place, no original upload, and no confirmation from any credible source. That combination is often more revealing than the pixels. A convincing fake wrapped in suspicious context is still suspicious.

People who get better at spotting AI-generated video also tend to get calmer. That might sound odd, but it is true. The more you practice, the less likely you are to fall for the first emotional punch. Instead of reacting instantly, you start asking simple questions: Who posted this first? Is there a full-length version? Are major outlets reporting it? Does the audio match the mouth? Does the text make sense? Is there any provenance data? That shift from reaction to inquiry is the real skill.

And here is the funny part: once you train yourself to look carefully, AI videos can become almost comically obvious. Not all of them, but many of them. You notice the “perfect” influencer clip where the earrings change shape every three seconds. You notice the fake emergency video where the speaker’s teeth blur into a white rectangle. You notice the supposed eyewitness footage where every sign in the background reads like a keyboard fell down the stairs. At that point, the internet starts to look a little less magical and a little more like a stage set held together with digital tape.

The goal is not to become cynical about everything. It is to become precise. You do not need to assume every video is fake. You just need to get good at pausing, observing, and verifying before you let a clip rent space in your brain for free.

Conclusion

So, how can you tell if a video is AI-generated? Start with the obvious clues: lip-sync drift, facial seams, blurry teeth, strange blinking, bad shadows, impossible reflections, garbled text, unstable backgrounds, and slippery movement. Then check what matters even more: provenance, source credibility, and context.

The strongest approach is not blind trust in your eyes or blind trust in software. It is a combination of both, plus a healthy pause before sharing. In a world full of synthetic media, that pause is not old-fashioned. It is powerful.