This heartfelt feature explores the viral 15-photo love story of an elderly Vietnamese couple whose bond was...

I Photographed The Love Story Of An Old Vietnamese Couple That Has Been Together Since The 30s (15 Pics)

Podcast: Olympic Gold Medalist on Alcoholism, Recovery, and Redemption

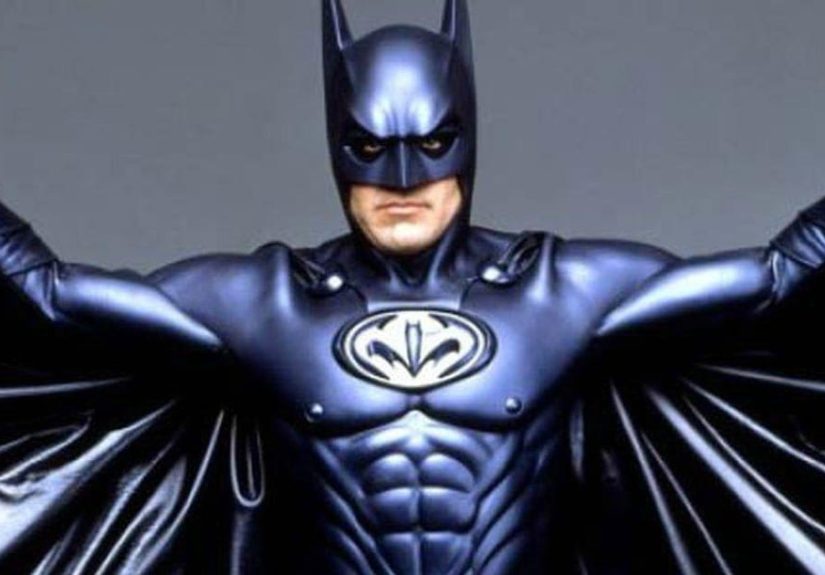

Actors Talk About The Roles They Regret Taking

56 DIY Christmas Wreath Ideas for Every Holiday Style

Retina scan may provide clues to early heart disease – Harvard Health