Table of Contents

- What “Content Integrity” Means to Us

- Our Editorial Standards

- How We Verify Information

- Originality and Anti-Plagiarism Standards

- Transparency: Who We Are, Why We Published, and What Influenced It

- Independence: Keeping Editorial Separate From Ads

- Corrections, Updates, and Version Clarity

- Special Care for “Your Money or Your Life” Topics

- AI-Assisted Content: Allowed Tools, Non-Negotiable Rules

- Reader Experience as an Integrity Issue

- How We Audit and Improve Integrity Over Time

- Conclusion: Integrity Is a Practice, Not a Press Release

- Real-World Experiences That Shaped Our Approach (Extra Insights)

Content integrity is the promise that what you read here is built to be useful, accurate, fair, and transparent: not “optimized” into nonsense, not stitched together from rumors, and definitely not padded with fluff like a couch that’s seen too many movie nights.

We publish for real people with real questions. That means our standards aren’t a one-time checklist; they’re an everyday habit. Below is how we protect editorial integrity across the entire lifecycle of a piece: idea to draft to publish to (when needed) correction.

What “Content Integrity” Means to Us

We define content integrity as the combination of:

- Accuracy: claims match the best available evidence and are presented with proper context.

- Transparency: readers can understand who created the content, why it exists, and what influenced it.

- Originality: we create value, not duplicates: no plagiarism, no “same article, different hat.”

- Accountability: we correct mistakes promptly and clearly, and we learn from them.

- Independence: editorial decisions are not for sale (even if someone offers a very nice fruit basket).

Integrity also means we respect your time. If we can’t improve your understanding, we don’t deserve your scroll.

Our Editorial Standards

Accuracy first, always

Every article starts with a simple question: What are we claiming? We identify key statements (especially numbers, health claims, financial guidance, safety guidance, and “everyone says” type assertions) and treat them like luggage at the airport: they don’t go through without inspection.

We focus on:

- Specificity: “Studies suggest” becomes “which studies, what did they measure, and who was included?”

- Scope: we avoid overstating results (no turning “may help” into “guaranteed miracle”).

- Context: we explain tradeoffs, limitations, and when advice may not apply.

Fairness, not false balance

Fairness means representing credible viewpoints accurately and proportionally. It does not mean giving equal weight to claims that are unsupported by evidence. If one side has decades of research and the other side has a meme and a vibes-based podcast clip, we won’t pretend they’re tied at halftime.

Clear attribution and sourcing

We prioritize primary sources (official guidance, peer-reviewed research, public records, direct statements) whenever possible. When we rely on secondary reporting or expert commentary, we cross-check it and keep the chain of information intact so the origin doesn’t get lost in a game of “telephone.”

How We Verify Information

Verification is not one step; it’s a workflow. Here’s our typical process for high-stakes or detail-heavy content:

1) Define the reader’s need

We get specific about intent. “Content integrity policy” might mean: How do we fact-check? How do we handle AI? How do we label sponsored content? Each intent changes the structure and what must be explained.

2) Build a claim map

Before we polish a single sentence, we list the claims that require support. Examples:

- “We correct errors promptly.” (Needs a corrections process.)

- “We disclose affiliate relationships.” (Needs a disclosure standard and placement.)

- “We follow E-E-A-T principles.” (Needs author transparency, reputation signals, and accountable updates.)
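
As a rough illustration, a claim map like the one above could be kept as a small data structure. This is only a sketch under assumptions: the `Claim` class, its field names, and the evidence reference string are hypothetical and not part of our actual tooling.

```python
from dataclasses import dataclass, field


@dataclass
class Claim:
    """One statement in a draft that needs support before publication."""
    text: str
    needs: str  # the kind of support this claim requires
    evidence: list = field(default_factory=list)  # references collected so far

    @property
    def supported(self) -> bool:
        # A claim counts as supported once at least one reference is attached.
        return len(self.evidence) > 0


def unsupported(claims):
    """Return the claims that still have no evidence attached."""
    return [c for c in claims if not c.supported]


claim_map = [
    Claim("We correct errors promptly.", needs="a corrections process"),
    Claim("We disclose affiliate relationships.",
          needs="a disclosure standard and placement"),
]
# Hypothetical reference label, used only for illustration.
claim_map[0].evidence.append("corrections-policy (hypothetical reference)")

for claim in unsupported(claim_map):
    print(f"BLOCKED: {claim.text} -> needs {claim.needs}")
```

Keeping the required support next to each claim makes it easy to see, before any polishing starts, which statements cannot yet be published.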

3) Use a source hierarchy

Not all sources are equal. Our default hierarchy looks like this:

- Primary: government, official regulators, original research, direct interviews, original datasets.

- Authoritative secondary: established standards bodies, major news organizations’ published ethics standards, reputable academic institutions.

- Contextual support: explainers that summarize accurately and add clarity, used carefully and cross-checked.
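
One way to picture this hierarchy is as an ordered ranking. The sketch below is illustrative only: the `SourceTier` names mirror the three tiers above, while the rule that any claim lacking a primary source is flagged for cross-checking is an assumption made for the example, not a documented rule.

```python
from enum import IntEnum
from typing import List, Optional


class SourceTier(IntEnum):
    """Lower value means a stronger source, mirroring the hierarchy above."""
    PRIMARY = 1
    AUTHORITATIVE_SECONDARY = 2
    CONTEXTUAL_SUPPORT = 3


def strongest_tier(tiers: List[SourceTier]) -> Optional[SourceTier]:
    """Return the strongest tier backing a claim, or None if it has no sources."""
    return min(tiers) if tiers else None


def needs_cross_check(tiers: List[SourceTier]) -> bool:
    """Assumed rule: flag any claim that has no primary source behind it."""
    best = strongest_tier(tiers)
    return best is None or best > SourceTier.PRIMARY


print(needs_cross_check([SourceTier.CONTEXTUAL_SUPPORT]))
print(needs_cross_check([SourceTier.PRIMARY, SourceTier.CONTEXTUAL_SUPPORT]))
```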

4) Cross-check the “sharp edges”

We pay special attention to:

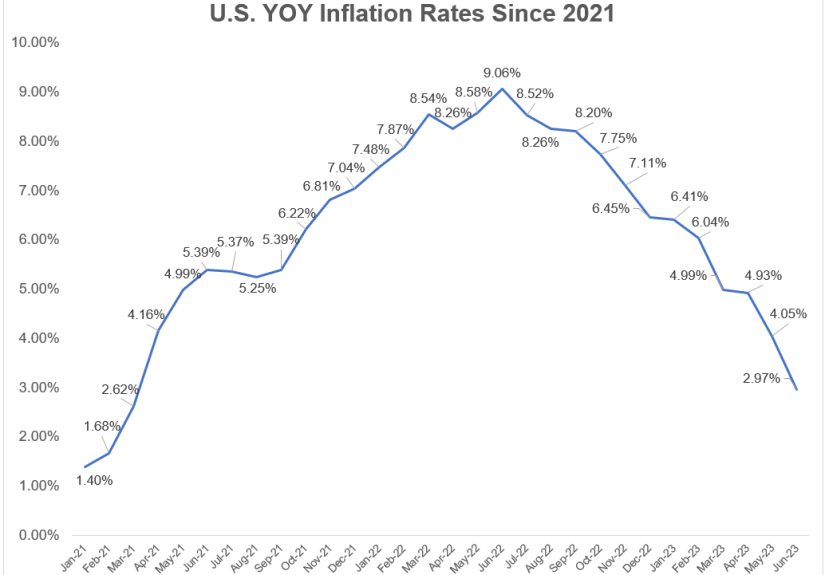

- Numbers: dates, percentages, rates, dosage ranges, price claims, timelines.

- Definitions: terms that are easy to misuse (e.g., “cure,” “toxin,” “clinically proven,” “guaranteed”).

- Comparisons: “best,” “safest,” “most effective,” “works faster,” etc. We qualify or avoid these unless the evidence truly supports them.

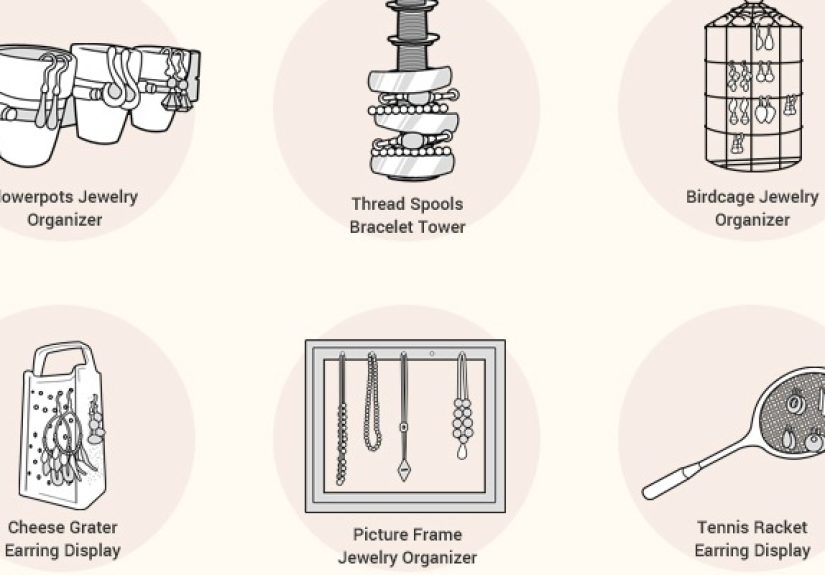

- Images and examples: we avoid misleading visuals and keep examples representative.

5) Editorial review for logic, tone, and completeness

Integrity isn’t only about facts; it’s also about reasoning. We review for:

- Logical consistency: no contradictions between sections.

- Reader safety: clear guidance on when to consult a professional for health/safety topics.

- Plain language: we explain jargon, because “approachable” is a feature, not an apology.

Originality and Anti-Plagiarism Standards

We do not copy-paste. We do not “lightly rewrite.” We do not do the content equivalent of changing a hat and calling it a new person.

Instead, we create original value by:

- Synthesizing multiple reputable perspectives into one coherent, reader-friendly explanation.

- Adding structure: decision trees, checklists, “what to do next” guidance, and examples.

- Updating context: what’s changed, what’s stable, and what readers should watch.

- Making it usable: clear headings, short paragraphs, and direct answers without hiding the nuance.

Transparency: Who We Are, Why We Published, and What Influenced It

Author identity and expertise

Readers deserve to know who’s behind the words. We aim for clear author attribution, relevant background, and the editorial checks that shaped the final piece. On complex topics, we rely on subject-matter review or credible references and spell out limitations when we cannot verify something directly.

Ownership, funding, and relationships

Transparency includes business realities. If content includes affiliate recommendations or sponsored relationships, we label it clearly. If a piece is opinion or analysis, we label it accordingly so readers don’t have to play detective.

Independence: Keeping Editorial Separate From Ads

We maintain a strict separation between editorial decisions and business incentives. That includes:

- No pay-for-coverage: we don’t publish favorable editorial content in exchange for payment.

- Clear labeling: sponsored content is labeled, not disguised as reporting.

- Disclosure rules: when compensation, freebies, or material connections exist, disclosures must be clear and near the relevant content, not hidden like a “Where’s Waldo?” challenge.

We’d rather lose a short-term opportunity than lose long-term trust. Trust is expensive to build and shockingly easy to misplace.

Corrections, Updates, and Version Clarity

Mistakes happen. What matters is what you do next.

Our corrections approach

When we find an error that could mislead a reader, we correct it promptly and clearly. We aim to:

- Fix the error at the source (in the article, chart, or data reference where it appears).

- Explain what changed in plain language when the change is meaningful.

- Match visibility to seriousness: bigger mistake, bigger correction note.

Updates vs. corrections

Not every change is a correction. Sometimes information evolves: guidelines update, prices shift, new research emerges. In those cases, we update content with a clear “last updated” signal and adjust the guidance to reflect what’s currently supported.

Special Care for “Your Money or Your Life” Topics

Some content can meaningfully affect health, finances, safety, or legal decisions. For these topics, our integrity bar is higher because the downside isn’t “mild confusion”; it can be harm.

For YMYL content, we emphasize:

- Stronger sourcing: primary references whenever feasible.

- Conservative language: we avoid certainty when the evidence is mixed.

- Decision support: what to ask a professional, what to monitor, what to do in urgent situations.

- Conflict checks: we scrutinize incentives that could bias recommendations.

AI-Assisted Content: Allowed Tools, Non-Negotiable Rules

We treat AI as a tool: not an author, not a source, and definitely not a substitute for verification. AI can help with organization, outlines, readability improvements, and drafting support, but it cannot be the final authority on factual claims.

Our AI ground rules

- No “AI as a source”: if a claim can’t be verified via reputable evidence, it doesn’t go in.

- Human accountability: a human editor owns the final content, including every claim.

- Bias and hallucination checks: we treat AI outputs as drafts that require scrutiny, not answers that require applause.

- Disclosure when appropriate: when AI meaningfully contributes to creation (beyond trivial formatting), we consider transparency practices aligned with reader expectations.

In other words: AI can help carry groceries, but it doesn’t get to drive the car.

Reader Experience as an Integrity Issue

Integrity isn’t only about what we say; it’s also about how we present it. We design for clarity and accessibility:

- Readable structure: descriptive headings, short paragraphs, scannable lists.

- Plain English: we explain terms instead of collecting them like trading cards.

- Accessible formatting: thoughtful use of headings and lists to support screen readers and easy navigation.

- No deceptive UX: we avoid misleading buttons, fake countdowns, or “accidental” subscriptions. Trust shouldn’t require a lawyer.

How We Audit and Improve Integrity Over Time

We treat integrity like a system that can be measured and improved, not a slogan printed on a mug.

Quality checks we use

- Periodic content reviews: especially for evergreen and high-traffic pages.

- Staleness checks: we revisit content where guidance changes quickly.

- Source refresh: older citations and claims get re-verified.

- Reader feedback loop: we take correction requests seriously and respond with the same rigor we use internally.
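
A staleness check of the kind listed above could look roughly like this. Everything specific here is an assumption for illustration: the policy names no review intervals, so the day counts, the page slugs, and the dates are all hypothetical.

```python
from datetime import date, timedelta

# Hypothetical review intervals in days; the policy itself gives no numbers.
REVIEW_INTERVAL_DAYS = {"ymyl": 90, "evergreen": 365}


def is_stale(last_verified: date, kind: str, today: date) -> bool:
    """True when a page has gone longer than its interval without re-verification."""
    interval = timedelta(days=REVIEW_INTERVAL_DAYS[kind])
    return today - last_verified > interval


# Made-up sample pages: (slug, content kind, date last verified).
pages = [
    ("dosage-explainer", "ymyl", date(2024, 1, 10)),
    ("style-guide", "evergreen", date(2024, 1, 10)),
]
today = date(2024, 6, 1)

due = [slug for slug, kind, verified in pages if is_stale(verified, kind, today)]
print(due)  # ['dosage-explainer']
```

Using a shorter interval for fast-changing topics reflects the idea that content where guidance changes quickly gets revisited more often.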

Signals we aim to earn (not fake)

Search engines and readers both look for signs of trust: clear authorship, transparent policies, credible reputation, and consistent accuracy. We don’t chase these signals with gimmicks; we build them by doing the work.

Conclusion: Integrity Is a Practice, Not a Press Release

We believe content integrity is built through repeatable processes: verify, attribute, disclose, correct, and improve. It’s not glamorous. It’s not viral. It’s the unsexy backbone of trustworthy publishing, like flossing, but for journalism and editorial work.

If you ever spot something that looks wrong, unclear, or outdated, we want to know. Good publishing is a collaboration between writers, editors, experts, and readers. And we’re committed to earning trust the slow way: one accurate sentence at a time.

Real-World Experiences That Shaped Our Approach (Extra Insights)

Policies sound great on paper, until real publishing happens at real speed. Here are a few experiences that sharpened our approach to content integrity and reminded us why “close enough” is not a standard.

Experience #1: The statistic that looked right… until it didn’t

We once worked on an explainer that referenced a widely repeated statistic from a popular secondary source. It appeared everywhere, quoted confidently, and had that seductive vibe of “surely someone checked this.” During verification, we traced it back through multiple retellings and discovered the original context was narrower than the internet made it sound. The number came from a specific population, in a specific setting, during a specific timeframe, meaning it was real, but not universal.

That moment shaped two habits: (1) we chase numbers to their origin, and (2) we don’t just ask “Is this true?”; we ask “When is this true, and for whom?” The fix wasn’t dramatic, but it mattered: we updated the language to reflect the scope, added context, and changed the conclusion so readers wouldn’t overgeneralize. The result was less flashy, more accurate, and far more helpful, like swapping fireworks for a flashlight when you’re actually trying to find your keys.

Experience #2: A reader caught what automation missed

Another time, a reader emailed us about a small but important mismatch: a table value didn’t align with the paragraph summary. Nothing was “made up,” but the formatting changes in editing caused one line to drift out of sync. This wasn’t a scandal; it was a spreadsheet-level oops. Still, it could have confused someone who relied on the table for a quick decision.

We corrected it, noted the update, and then improved our workflow: whenever we publish a piece with data tables, we do a final “table-to-text reconciliation” pass. It’s not glamorous work. No one is handing out trophies for “Most Improved Data Consistency.” But it prevents small errors from turning into big misunderstandings, especially in topics where precision matters.
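
A reconciliation pass like this can be partly automated. The following is a minimal sketch, assuming the table is available as rows of string cells; the function names and the sample data are hypothetical, and the simple pattern does not handle thousands separators such as "1,000".

```python
import re


def numbers_in(text):
    """Extract numeric tokens such as 12, 4.5, or 30% from a string."""
    return set(re.findall(r"\d+(?:\.\d+)?%?", text))


def reconcile(summary, table_rows):
    """Return numbers the summary mentions that appear in no table cell."""
    table_values = set()
    for row in table_rows:
        for cell in row.values():
            table_values |= numbers_in(cell)
    return numbers_in(summary) - table_values


# Made-up example: the summary says 20, but the table says 25.
summary = "Plan A costs 12 per month and Plan B costs 20."
table = [
    {"plan": "A", "price": "12"},
    {"plan": "B", "price": "25"},
]

print(reconcile(summary, table))  # {'20'}
```

A non-empty result does not prove an error, since a summary may legitimately cite a figure that is not in the table. It only tells an editor where to look.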

Experience #3: The affiliate roundup that tried to become a sales pitch

Product content is where integrity gets tested in a very specific way: money. We’ve had drafts where the writing got a little too enthusiastic: suddenly every product was “best-in-class,” “game-changing,” and “life-altering.” (If a spatula changes your life, we support you… but we’re still going to verify the claim.)

So we tightened rules: we avoid absolutes unless evidence supports them, we use consistent evaluation criteria, and we label commercial relationships clearly. If we recommend something, it must be because it fits the reader’s needs, not because it fits a revenue target. The goal is a review that sounds like a smart friend, not a megaphone with a commission link.

Experience #4: AI drafts are fast; corrections are faster

AI can produce clean prose quickly, but it can also produce confident nonsense quickly. We’ve seen drafts that included plausible-sounding but incorrect details: a policy described with the wrong effective date, a medical term used too broadly, or a definition that was “close” but not correct. These weren’t malicious mistakes; they were automation doing what automation does when it isn’t supervised.

That’s why we treat AI as a drafting assistant and require human verification for every meaningful claim. In practice, that means editors must be able to point to evidence for key statements, especially in YMYL content. If something cannot be verified, it gets removed or rewritten as uncertainty. The rule is simple: speed is not an excuse for guessing. Publishing is the part where we stop sounding smart and start being correct.

These experiences reinforced our guiding principle: integrity isn’t a single decision; it’s the sum of small choices made consistently. And yes, it takes longer. But the alternative is faster content that readers can’t trust, which is like building a bridge out of cardboard: it looks impressive right up until someone tries to use it.