Table of Contents

- What antivaccine misinformation looks like today

- The real-world cost: missed protection, preventable outbreaks, eroded trust

- Stop playing myth whack-a-mole

- The five-layer defense that actually reduces antivaccine misinformation

- Layer 1: Individual skills (the “pause before you post” layer)

- Layer 2: Family and friend conversations (keep the relationship, not the dunk)

- Layer 3: Clinician communication (the most trusted messenger layer)

- Layer 4: Community partnerships (trust travels locally)

- Layer 5: Platforms, media, and institutions (reduce amplification of junk)

- Message design: what persuades without preaching

- How to measure progress (beyond likes and retweets)

- Conclusion: build a healthier information environment

- Experiences from the front lines (composite scenarios that mirror real patterns)

- 1) The pediatric visit where the real concern isn’t the rumor: it’s feeling dismissed

- 2) The school nurse who becomes the neighborhood help desk

- 3) The public health communicator racing the algorithm

- 4) The multilingual gap where misinformation moves in first

- 5) The turning point: when someone realizes misinformation can be profitable

Some epidemics spread through coughs and sneezes. This one spreads through screenshots, voice notes, and the “just asking questions” cousin who forwards a 3-minute video like it’s a peer-reviewed journal.

Antivaccine misinformation isn’t only a social media problem. It’s a trust problem, a communication problem, and (sometimes) a “we didn’t answer the question fast enough” problem. The good news: there are practical, evidence-informed ways to slow it down without turning every conversation into a debate club final.

This article unpacks why vaccine myths catch fire, what they cost communities, and how to respond with strategies that actually work: prebunking (yes, that’s a real thing), better debunking, and trust-building communication that doesn’t rely on shaming people into changing their minds.

What antivaccine misinformation looks like today

Antivaccine misinformation isn’t one single lie; it’s an ecosystem. It evolves. It borrows scientific words. It weaponizes uncertainty. And it often comes packaged with emotional hooks that make it feel “true” even when it isn’t.

The greatest hits (and why they won’t retire)

Many antivaccine narratives recycle familiar themes:

- “Vaccines cause autism” (a claim repeatedly investigated and not supported by credible evidence, yet constantly revived).

- “Natural immunity is always better” (ignoring that “natural” infections can carry serious risks).

- “Too many vaccines overload the immune system” (a claim that sounds intuitive, but doesn’t match how immune systems work in real life).

- “The ingredients are toxic” (often relying on scary-sounding chemical names without context or dose).

- “They rushed it, so it’s experimental forever” (turning a moment in time into a permanent status).

What changes is the costume. A rumor might start as a meme, then become a podcast segment, then show up as a confident-sounding paragraph in a parenting forum. By the time a clinician hears it, it’s already been polished by repetition.

Why misinformation spreads faster than facts

If you’ve ever tried to share an accurate vaccine explanation, you know the problem: truth usually arrives carrying a backpack full of nuance. Misinformation arrives riding a unicycle, juggling, and shouting, “DOCTORS HATE THIS ONE WEIRD TRICK!”

Misinformation tends to spread because it’s designed to spread:

- It triggers emotion (fear, anger, disgust, outrage).

- It offers simple explanations for complex events.

- It creates a villain (a company, a government, a “they”).

- It rewards identity (“people like us know the truth”).

- It exploits platform mechanics that elevate engaging content, not necessarily accurate content.

And here’s the tricky part: misinformation often piggybacks on real concerns. People can be worried about side effects, historical mistreatment, healthcare costs, or being dismissed by professionals. Bad actors (and sometimes just confused neighbors) use those concerns as fuel.

The real-world cost: missed protection, preventable outbreaks, eroded trust

Antivaccine misinformation has consequences that don’t stay online. It can delay routine childhood shots, reduce uptake during outbreaks, and turn normal questions into suspicion and hostility.

In communities with pockets of low vaccination coverage, vaccine-preventable diseases can return, especially highly contagious ones. When vaccine myths circulate widely, schools and local health departments spend time firefighting rumors instead of focusing on prevention, access, and care.

But the biggest cost is harder to measure: trust. Trust is what makes people show up for a checkup, believe a warning, or accept a recommendation when they’re anxious. Once trust gets replaced by “everyone is lying,” even good information bounces off.

Stop playing myth whack-a-mole

A common mistake is responding to misinformation like it’s an endless arcade game: smack one myth down, two more pop up. That approach exhausts communicators and often backfires, because repeating a myth (even to refute it) can accidentally spread it further.

Instead, effective responses use a layered strategy: prebunk when possible, debunk carefully when needed, and build resilience so the next rumor doesn’t land as easily.

Prebunking: vaccinate people against the tactics

Prebunking (also called psychological inoculation) means warning people about the kinds of manipulation they may encounter before they encounter it. You’re not arguing about one claim; you’re teaching pattern recognition.

Examples of prebunking messages that work in real life:

- “If a post makes you feel panicked or furious, pause. That emotional punch is often the point.”

- “Be cautious of videos that say ‘they don’t want you to know’ but don’t cite verifiable sources.”

- “A screenshot of a headline isn’t evidence. Look for the original source and context.”

Think of it as teaching people how to spot the methods: cherry-picking, fake experts, conspiracy framing, and misleading anecdotes presented as universal truths.

Debunking: do it without amplifying the myth

Sometimes you have to correct misinformation directly, especially if it’s spreading fast or causing immediate harm. The key is how you do it.

A simple debunking structure:

- Start with the truth (lead with the accurate statement).

- Warn about misinformation (“A false claim is circulating…”).

- Address briefly (don’t linger on the myth).

- Explain the reasoning (give a clear “why,” not just “no”).

- Repeat the truth (end where you began).

This keeps accurate information more memorable than the rumor itself.

The five-layer defense that actually reduces antivaccine misinformation

No single tactic will solve an “epidemic.” What works is stacking protections, like public health does with everything else.

Layer 1: Individual skills (the “pause before you post” layer)

People don’t need to become epidemiologists. They need a short checklist:

- Pause before sharing. Emotion is a red flag, not a green light.

- Check the source. Who is it? What’s their track record? Are they selling something?

- Look for confirmation from credible medical or public health organizations.

- Watch for manipulation cues: “secret cure,” “they’re hiding this,” “doctors won’t tell you,” “one weird trick.”

Even small behavior changes, like not sharing unverified claims, reduce spread. That’s not censorship; that’s basic hygiene for the information age.

Layer 2: Family and friend conversations (keep the relationship, not the dunk)

If your goal is to “win,” you’ll probably lose the person. If your goal is to keep the door open, you’re more likely to help them later.

Try scripts that lower defenses:

- “I get why that sounds scary. Want to look at the original source together?”

- “What would you consider a trustworthy place to check this?”

- “If the claim were false, how would we know?”

People often change their minds in private, not on a group thread. Give them an off-ramp that doesn’t require humiliation.

Layer 3: Clinician communication (the most trusted messenger layer)

Healthcare professionals often remain among the most trusted sources of vaccine information, especially when they communicate with empathy and clarity.

Communication moves that help:

- Use a presumptive recommendation: “Today we’ll do the scheduled vaccines.” (instead of “What do you want to do?”)

- Ask what worries them most (don’t guess).

- Affirm the intention: “You’re trying to keep your child safe.”

- Offer clear, concrete answers and check understanding.

- Keep the door open for future conversations if they aren’t ready today.

This isn’t about flooding people with facts. It’s about making accurate information feel accessible, respectful, and relevant.

Layer 4: Community partnerships (trust travels locally)

Public health messages land best when they come from people a community already trusts: local clinicians, school nurses, faith leaders, coaches, parent groups, and community organizations.

Community-level tactics that work:

- Fill information voids quickly when something changes (new guidance, new outbreak, new school requirements).

- Bring answers to the platforms and places where rumors are spreading (not just a PDF on a website).

- Offer practical support: clinic hours, transportation info, multilingual materials, and reminders.

When access is hard, misinformation becomes an easy explanation. Convenience is a form of confidence-building.

Layer 5: Platforms, media, and institutions (reduce amplification of junk)

Individuals can’t solve a system that rewards sensationalism. Technology platforms and media organizations influence what people see first, what spreads, and what gets repeated.

System-level approaches include:

- Design changes that add friction (prompts before sharing, context labels, limiting algorithmic boosting of repeat offenders).

- Support credible health information in search and recommendations, especially during outbreaks.

- Protect health professionals and communicators from harassment that drives experts offline.

- Journalism practices that avoid “both-sidesing” settled science and avoid amplifying fringe claims for clicks.

In plain terms: if a platform can recommend you ten videos about air fryers in five minutes, it can also stop recommending misinformation like it’s a hobby.

Message design: what persuades without preaching

Lead with what people care about

Most people don’t wake up thinking, “I’d love to misunderstand immunology today.” They’re thinking about their child, their pregnancy, their aging parents, their job, and the price of groceries.

Frame vaccine conversations around everyday goals:

- Keeping kids in school instead of home sick

- Protecting newborns and older adults

- Avoiding complications that can disrupt work and family life

Use plain language and visual clarity

Replace jargon with clarity. “Rare side effect” beats “adverse event profile.” “Your immune system learns to recognize the germ” beats “antigenic priming.”

Also: make it shareable. If accurate information is harder to share than a rumor, the rumor has a head start.

Be honest about uncertainty (it builds credibility)

People can smell fake certainty from a mile away. Good communicators say what is known, what is being studied, and how recommendations are updated when evidence changes.

That transparency can be the difference between “they keep changing their story” and “they’re updating based on new data.”

How to measure progress (beyond likes and retweets)

If the goal is reducing harm, measure outcomes that matter:

- Are fewer people delaying routine vaccines?

- Are communities asking questions earlier, before rumors spread?

- Do clinics report more productive conversations and fewer hostile encounters?

- Are local vaccination gaps shrinking over time?

Success can look unglamorous: a parent schedules one vaccine today and agrees to talk again next month. That’s not viral content, but it’s real prevention.

Conclusion: build a healthier information environment

Antivaccine misinformation thrives where fear is high, trust is low, and answers are hard to access. Addressing it means doing more than correcting a few bad posts. It means building a healthier information environment: one that makes accurate information easier to find, easier to understand, and easier to trust.

When we combine prebunking, careful debunking, respectful conversations, community partnership, and smarter systems, misinformation loses one of its superpowers: speed. And when misinformation slows down, truth finally gets a chance to put its shoes on.

Note: This article is for general informational purposes and isn’t medical advice. For personal vaccine questions, talk with a licensed healthcare professional or your local health department.

Experiences from the front lines (composite scenarios that mirror real patterns)

The stories below are composites, based on commonly reported experiences from clinicians, public health workers, and educators. Details are blended and anonymized to protect privacy while highlighting what these conversations often look like.

1) The pediatric visit where the real concern isn’t the rumor: it’s feeling dismissed

A parent arrives with a phone full of tabs: “toxins,” “immune overload,” a video titled “What they aren’t telling you.” The clinician could respond with a rapid-fire fact dump. Instead, they ask one question: “What worries you the most?”

The answer isn’t a specific ingredient. It’s fear of being a “bad parent” if something goes wrong. The clinician names that fear out loud (without endorsing the misinformation) and explains the vaccine schedule in plain language. They lead with the truth, avoid repeating every rumor, and offer a simple plan: “We can do the recommended vaccines today. If you want, I’ll also give you a one-page summary from a pediatric source, and we can revisit any questions next visit.”

The parent doesn’t transform into a vaccine evangelist on the spot. But they agree to vaccinate today and keep talking. That’s a win that doesn’t trend.

2) The school nurse who becomes the neighborhood help desk

When a rumor hits (“the school is secretly forcing experimental shots”), the nurse’s inbox fills up. The best move isn’t arguing in the comments. It’s filling the information void quickly with a short, clear message: what’s required, what’s recommended, what exemptions mean, and where families can get reliable answers.

The nurse teams up with a local clinic to host a short Q&A night. The format matters: fewer slides, more listening. Parents ask practical questions (cost, side effects, timing) alongside misinformation-driven questions (autism, fertility, DNA). The nurse answers calmly, repeats the core truths, and shares next steps: where to get vaccinated, how to access records, and how to speak with a clinician privately.

Rumors don’t vanish, but the school community gets an alternative: a trusted person who responds quickly and respectfully.

3) The public health communicator racing the algorithm

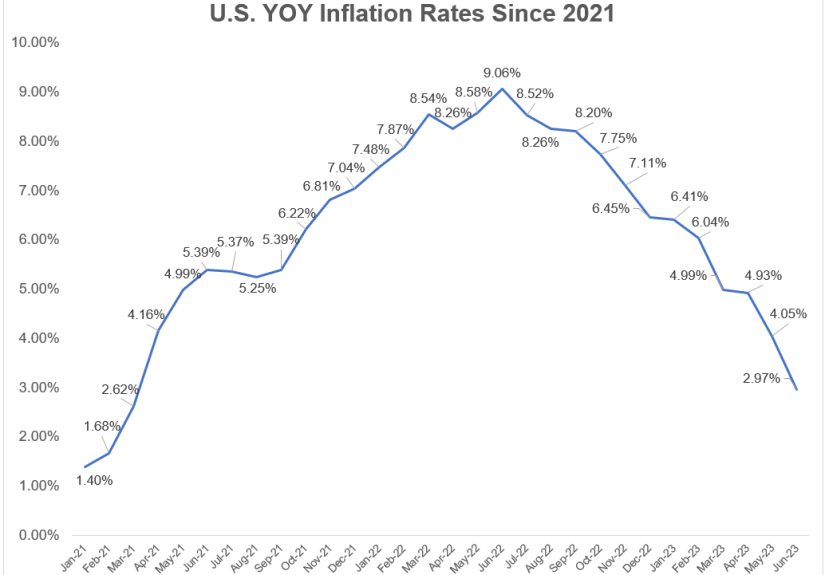

A local health department notices a spike in a claim spreading on the same platform: a cropped chart implying vaccines “cause” a condition. The communicator doesn’t post a 900-word rebuttal that no one shares. They create a short “before you share” graphic: “This chart is missing context. Here are the key facts. Here’s what the full data show. Here’s where to verify.”

They also recruit trusted messengers: a local family doctor, a community leader, a pharmacist who’s known by name. The posts use the same channels as the rumor, because correcting misinformation in a place nobody visits is like installing a smoke alarm in a shed while the kitchen is on fire.

4) The multilingual gap where misinformation moves in first

In some communities, official information arrives late (or not at all) in the languages people use at home. In that gap, translated misinformation fills the space fast. A community organization steps in with culturally relevant messaging: short videos, voice notes, and live sessions where people can ask questions without embarrassment.

What changes minds here isn’t a single “gotcha” fact. It’s respect, repetition, and access: “We’ll answer your question. We’ll show you where the information comes from. And we’ll help you get an appointment if you choose to vaccinate.”

5) The turning point: when someone realizes misinformation can be profitable

A young adult notices a pattern: the loudest antivaccine accounts are always selling something, whether supplements, memberships, “detox” kits, or exclusive content. Once they see that incentive, their relationship with the content shifts. They start asking: “Who benefits if I believe this?”

That’s media literacy doing its job. It doesn’t require blind trust in institutions; it requires healthy skepticism in all directions, including toward people who monetize fear.

Across these experiences, the common thread is simple: people move toward trust when they feel heard, when answers are easy to access, and when accurate information is delivered by messengers who respect them. Addressing antivaccine misinformation isn’t only about correcting claims; it’s about rebuilding the conditions where truth can compete.